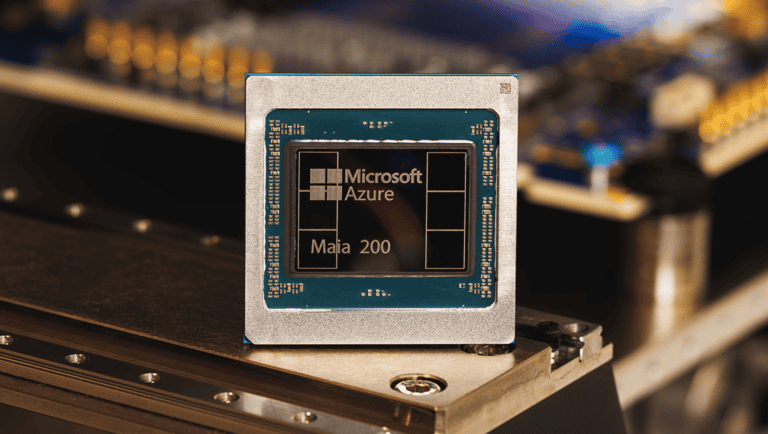

In the midst of the AI boom, one can easily forget Moore’s Law has lost its fight to physics. Thankfully, innovative chip designs are arriving almost as often as the state-of-the-art AI models meant to run on them. With Maia 200, Microsoft is seeking to make inferencing on its Azure cloud as cost-efficient as possible. However, in conversation with Andrew Wall, Microsoft’s General Manager of Azure Maia, we’re learning just how multifaceted and complex the future of AI compute may yet become. What’s behind this trend?

When OpenAI trained GPT-4 in early 2023, it needed around 25,000 Nvidia A100 GPUs. Each ran intermittently for over three months to massively upgrade ChatGPT beyond its original GPT-3.5 foundation. Training, however, is a finite exercise, whereas inferencing is continuous. Microsoft Azure, which ran GPT-4, quickly required far more compute to keep ChatGPT running than it had needed to train the model. The GPU, never purpose-built for the task, was used for inferencing in the early days of ChatGPT simply because it was the best available, most widely accessible option.

As inference demand compounded, the gap between “best available” and “actually optimal” grew wide enough to justify building AI accelerators purely focused on inferencing. The contrast with early 2023, when GPUs were effectively the only option for GenAI workloads, is stark. Nowadays, you’d perhaps only need around 5,000 Maia 200 accelerators to accomplish GPT-4’s original training task at the same rate. And rather strikingly, Maia 200 isn’t even intended for training. Instead, Microsoft is setting out to deliver efficient AI inferencing to run models in the cloud at a low cost.

AI inferencing becomes multifaceted

The point is clear: not only has raw AI computing power dramatically improved, it has become far more mature. Andrew Wall, GM of Azure Maia since those early days of ChatGPT (which itself was born on Azure), explains why Maia 200 can’t be described as a straightforward AI inferencing upgrade. Focusing only on its 216 GB of HBM3e or its 7 terabytes per second of memory bandwidth misses the complete Azure-Maia proposition. Organizations running AI workloads, according to Wall, won’t often be thinking of running on Maia 200 specifically. Azure instead focuses on application layers that remove the need to pick a given chip and that target the hardware best suited to a given AI workload.

Microsoft Azure will therefore serve up Maia 200 as an AI inferencing TCO boost underneath abstraction layers. Users may very well still run AI models and specific AI tasks on other chips, such as GPUs. But not every workload behaves in quite the same way, and choices around enterprise data and model selection determine which chip is best suited for the task at hand. AWS and Cerebras recently went a step further by teaming up to split AI inferencing into its constituent prefill and decode tasks. In that scenario, Trainium 3 spins up to calculate the model’s KV cache based on the input, with Cerebras’ CS-3 generating the eventual output.
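To make the prefill/decode split more concrete, here is a minimal toy sketch in Python. It is not how AWS, Cerebras or Microsoft implement it: the `toy_kv` projection, the "attention" arithmetic and all function names are hypothetical stand-ins. It only illustrates the shape of the hand-off: one phase processes the whole prompt and builds a KV cache, the other reuses and extends that cache one token at a time.

```python
from dataclasses import dataclass, field
from typing import List, Tuple


@dataclass
class KVCache:
    # One (key, value) pair per token seen so far.
    # Real caches hold tensors per layer and per attention head.
    entries: List[Tuple[int, int]] = field(default_factory=list)


def toy_kv(token: int) -> Tuple[int, int]:
    # Hypothetical stand-in for the key/value projection of a single token.
    return (token * 2, token * 3)


def prefill(prompt: List[int]) -> KVCache:
    # Compute-heavy phase: the whole prompt is processed in one pass.
    return KVCache(entries=[toy_kv(t) for t in prompt])


def decode(cache: KVCache, max_new_tokens: int) -> List[int]:
    # Memory-bound phase: each new token reads the full cache, then extends it.
    output = []
    for _ in range(max_new_tokens):
        next_token = sum(value for _, value in cache.entries) % 100  # toy "attention"
        output.append(next_token)
        cache.entries.append(toy_kv(next_token))
    return output


def serve(prompt: List[int], max_new_tokens: int) -> List[int]:
    cache = prefill(prompt)                # would run on the prefill-oriented chip
    return decode(cache, max_new_tokens)   # would run on the decode-oriented chip
```

In a real deployment, the cache returned by `prefill` would have to be transferred between devices, which is exactly the kind of cost such a split has to justify.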

Wall explains that Maia 200 sits somewhere between a generalized parallel processor like a GPU and specialized chips such as Cerebras’ CS-3 and Groq’s Language-Processing Unit (LPU). This, according to Wall, allows Microsoft to heavily accelerate known, critical elements of AI workloads while preserving enough general-purpose capabilities to handle the great unknown of future AI tasks.

This approach is risky, as Maia 200 will neither directly challenge GPUs for AI training nor be maximally efficient for current LLMs. Then again, who could have predicted the exact architectures of LLMs circa 2026, especially given the secrecy that vendors such as OpenAI and Anthropic maintain around them?

The great unknown

Speaking in enormously general terms, a chip takes anywhere from 18 to 36 months to go from its initial design stage to rollout. As a result, you can’t dream up complex AI accelerators for 2026 in 2025. Instead, you need to anticipate the industry’s trajectory and the movement of workloads, and build in some wiggle room. This is why Microsoft’s middle-of-the-road choice for Maia 200 was an intentional strategy, ensuring its 2026 arrival fulfilled a clear enterprise need. In Wall’s description, his team “threads the needle” of AI hardware development continuously. Maia 200 is part of a heterogeneous AI infrastructure and will run multiple models, including OpenAI’s current-day descendant of GPT-4, GPT-5.2.

Some specs highlight Maia 200’s inferencing-geared approach. Microsoft’s choice of SRAM allocation, one of the critical decisions for AI chips, is bold. At 272 MB, its hyper-fast on-die cache exceeds even the 192 MB of Nvidia’s currently deployed, training-focused Blackwell GPU. Put simply, it places more of the data needed for calculations close to the compute side of the chip. The result is far fewer cache misses and therefore faster token output. If the relevant data isn’t available in cache, a sizable 216 GB of HBM3e memory is available, capable of storing most AI models entirely on one chip. Together, these specs avoid latency-heavy round trips to external memory and storage, which is key to minimizing the time to generate tokens. Microsoft intentionally invests ahead of the curve here to stay on top of latency-sensitive workloads.
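A rough back-of-the-envelope sketch shows why capacity and bandwidth matter for inferencing. It uses the 216 GB and 7 TB/s figures quoted in this article; the model sizes and the one-byte-per-parameter precision are purely hypothetical. The simplification, a common rule of thumb rather than anything Microsoft has stated, is that generating one token for a single stream requires reading all model weights from memory once, and it ignores the KV cache, on-die SRAM and batching.

```python
HBM_CAPACITY_GB = 216         # HBM3e capacity as quoted in the article
HBM_BANDWIDTH_GB_PER_S = 7000  # 7 TB/s as quoted in the article (decimal units)


def weights_gb(params_billion: float, bytes_per_param: float) -> float:
    # 1e9 parameters * bytes per parameter / 1e9 bytes per GB
    return params_billion * bytes_per_param


def fits_on_one_chip(params_billion: float, bytes_per_param: float) -> bool:
    # Assumption: only the weights count; KV cache and activations are ignored.
    return weights_gb(params_billion, bytes_per_param) <= HBM_CAPACITY_GB


def decode_tokens_per_second_upper_bound(params_billion: float,
                                         bytes_per_param: float) -> float:
    # Assumption: every generated token streams all weights from HBM once,
    # so memory bandwidth caps single-stream token throughput.
    return HBM_BANDWIDTH_GB_PER_S / weights_gb(params_billion, bytes_per_param)


# Hypothetical 70-billion-parameter model stored at 1 byte per parameter.
print(fits_on_one_chip(70, 1.0))
print(decode_tokens_per_second_upper_bound(70, 1.0))
```

Under these assumptions, a hypothetical 400-billion-parameter model at the same precision would no longer fit on a single chip, which is where multi-chip setups come in.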

Developers may wish to access Azure’s deeper layers to make all these specs relevant. Bare-metal access runs on the NPL programming language, though most will communicate with Maia via its Triton compiler or PyTorch, available through the SDK. The functionality is currently available in preview. The accessibility of these tools matters a lot, as no competitor to Nvidia has yet built a software ecosystem to rival CUDA. That gap has buried more promising hardware than most care to remember.

Microsoft’s answer is to enable flexibility. Its SDK approach is intended to give power users who want to go deeper additional opportunities for optimization. If the abstraction layers do their job, however, most developers will never need to think about the silicon underneath at all. Whether that bet pays off will determine whether Maia 200’s hardware ambitions translate into something developers actually adopt.

A fragmented future

Maia 200 will predictably find a successor in Maia 300, which in due time will be replaced by Maia 400. Microsoft’s roadmap puts Maia 300 somewhere in 2027. Still, Wall tells us he expects Maia 200 to have a useful lifespan of around four or five years. This, if proven to be true, offers some comfort to those questioning the rapidity of AI hardware development. With timelines compressing over at Nvidia and AMD, one wonders when organizations can simply settle into a predictable cadence of AI-infused upgrades. Why tune for AI hardware that will inevitably be replaced in short order? Given this fluidity, we aren’t so sure today’s chips will still be viable in half a decade, but Microsoft suggests they will be.

Wall thinks insights into the inner workings of AI models have allowed his team to extend the usefulness of Azure Maia. Microsoft is in a rare position in this respect. Aside from Google, no other tech company is so heavily focused on both the AI hardware itself and the models that run on it. Microsoft is trending upwards in both areas, even if it has a long way to go. Beyond the models OpenAI provides, other vendors now also feature on Azure. Microsoft additionally has a Mustafa Suleyman-led AI group that will develop LLMs. As discussed so far, it clearly also has a mature approach to hardware. Acting on these two fronts is set to bring its advantages.

Wall considers the co-development of chips and models a key benefit. By working directly with this group as well as liaising with AI labs, hardware engineers can tune the silicon as the AI model’s internal levers change. This integrated approach allows them to balance resources on the SoC and unlock new capabilities that wouldn’t be possible if they simply treated off-the-shelf models as a “black box”.

Conclusion: complexity awaits

Beyond the silicon, we expect the heterogeneous architecture of AI’s future to become an unseen frontier. With existing hardware, massive improvements may be possible just by tweaking how AI workloads are split across the silicon on offer. Microsoft Maia 200 is set to have its time in the sun in 2026 as a low-TCO inferencing option, even if most business users won’t ever notice this fact beyond the bottom line.

At any rate, chips aren’t supposed to be front-facing. Nevertheless, Wall expects plenty of headline-grabbing developments. Industry veterans will already be well-acquainted with them, as the likes of TSMC, ASML and imec have had variations of these technologies on their roadmaps for years. Examples include chiplets, complicated 3D-stacked memory dies and silicon photonics. Wall thinks that Moore’s Law may still be challenged by “step function improvements” in these areas. Future chip designs, he thinks, will strategically apply new technologies to specific IP blocks (zones, essentially) within the silicon to find enormous gains.

Beyond that, the future of AI compute looks like it’ll be enormously complex. Abstraction layers will need to do a lot of heavy lifting to keep workloads both consistent and flexible. Workload routing, latency budgeting and cost optimization are set to be levers for AI computing for a long time to come. Microsoft is betting on these not just to deliver low TCO now, but also to make the public cloud the most viable foundation for optimized AI workloads in the years ahead. Running an AI model may no longer be a monolithic operation, provided you have the hardware to route your workloads to. If Microsoft succeeds in popularizing this (and it’s getting some help from industry rivals such as AWS and Cerebras), models may well be designed to benefit from this disaggregated setup. The interplay will likely continue for some time, and it will help decide where your AI should ideally run.
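As a minimal illustration of how workload routing, latency budgeting and cost optimization can interact, consider the Python sketch below. It does not describe Azure’s actual routing logic; the backend names, latency figures and cost figures are all hypothetical. The rule is simply: among the backends that meet the latency budget, pick the cheapest.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class Backend:
    name: str                  # hypothetical label, not a real product SKU
    latency_ms: float          # hypothetical expected latency for this workload
    cost_per_1k_tokens: float  # hypothetical cost figure


def route(backends: List[Backend], latency_budget_ms: float) -> Optional[Backend]:
    # Keep only the backends that satisfy the latency budget.
    eligible = [b for b in backends if b.latency_ms <= latency_budget_ms]
    if not eligible:
        return None  # nothing meets the budget; the caller must relax it
    # Among those, optimize for cost.
    return min(eligible, key=lambda b: b.cost_per_1k_tokens)


# Hypothetical fleet with made-up numbers.
fleet = [
    Backend("general-gpu", latency_ms=120, cost_per_1k_tokens=0.50),
    Backend("inference-accelerator", latency_ms=200, cost_per_1k_tokens=0.20),
    Backend("low-latency-asic", latency_ms=40, cost_per_1k_tokens=0.90),
]

choice = route(fleet, latency_budget_ms=250)
print(choice.name if choice else "no backend meets the budget")
```

A tighter budget changes the outcome: with the same made-up numbers, a 100 ms budget leaves only the most expensive backend eligible, which is the trade-off an abstraction layer has to manage on the user’s behalf.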

Ultimately, what’s most exciting about the future of AI compute is its multitude of possibilities at present. We simply don’t know what the AI architecture of the medium-to-long-term future will look like. CPUs once came to dominate other specialized pieces of silicon geared for individual tasks. The GPU challenged that paradigm and now, all sorts of [X]PUs define various processors that all run AI in some form. One thing is clear: the business user will only want to see the results, both in terms of monetary costs and model effectiveness. In the former space, Wall’s team appears confident Microsoft is well-prepared for the demands of today.
