Cisco and Nvidia today announced a major expansion and deepening of the Cisco Secure AI Factory with NVIDIA. The goal is to offer customers a complete, well-integrated, and secure AI stack. This should make it easier for those customers to get on board with AI.

Last year at Nvidia GTC, Cisco announced the Cisco Secure AI Factory with NVIDIA. In the twelve months that followed, several expansions were introduced. One of the main additions was the launch of N9100 switches featuring Nvidia’s Spectrum-X Ethernet chips. Today marks the first major update to this AI stack. We spoke with Kevin Wollenweber, SVP & GM of Data Center and Internet Infrastructure at Cisco, shortly before the announcement.

The path to full-stack

If you look at Cisco’s announcements over the past year and a half, it’s clear where the company is heading when it comes to (AI) infrastructure. It’s all about full-stack: offering customers a stack that’s as complete as possible, so they can get started quickly and effectively on whatever they want to do. Of course, a complete stack also helps Cisco close great deals, but that makes sense.

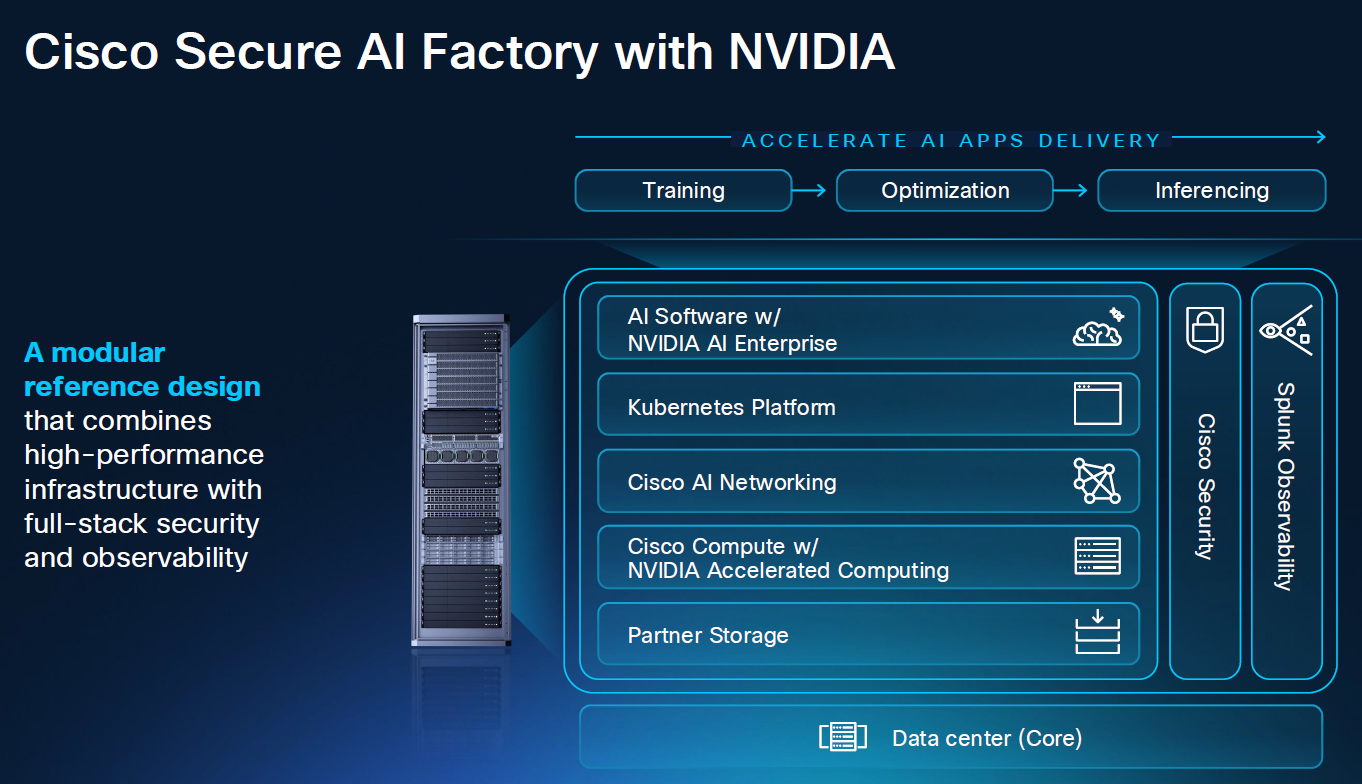

There are plenty of examples of this direction. Consider, for instance, the Unified Edge and Unified Branch offerings. You can view the announcement of the AI PODs as the starting point of Cisco’s full-stack approach for AI infrastructure. AI PODs are built according to the principles of Cisco Validated Designs, which place extra emphasis on how well the components that form the stack work together. AI POD also uses Nvidia AI Enterprise software.

From AI POD to Cisco Secure AI Factory with NVIDIA

However, AI POD was certainly not the end point in the development of a full-stack AI offering, nor of the role that NVIDIA plays in it. You can view AI POD as the foundation for the first generation of the Cisco Secure AI Factory with NVIDIA. In that initiative, the collaboration between Cisco and NVIDIA was already much closer, and it is clear to us that this was the next step on the path Cisco had mapped out.

Another notable aspect of the launch of the Secure AI Factory with NVIDIA last year was that Cisco specifically emphasized security as a differentiating factor. After all, the other “traditional” infrastructure players had, and still have, partnerships with Nvidia to jointly deliver hardware and software to customers. So Cisco wanted to make it clear that the stack it built together with Nvidia is not like the rest. AI Defense, among other things, plays a key role in Cisco’s security narrative around AI. With this, the Cisco Secure AI Factory with NVIDIA was officially born.

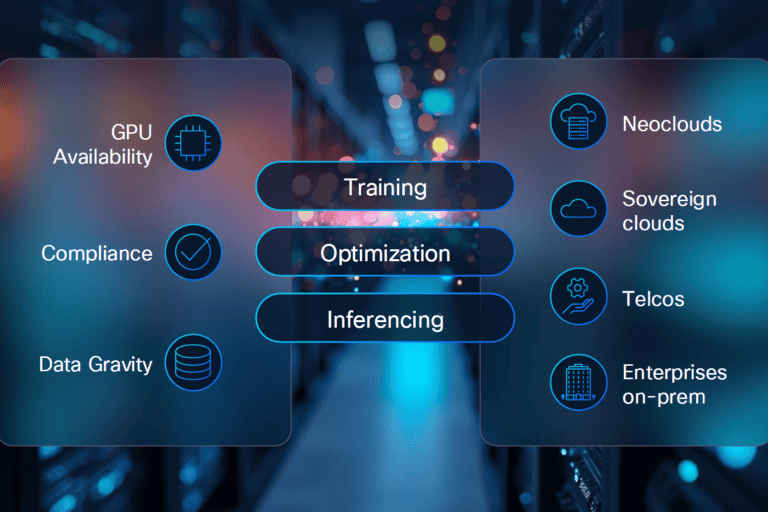

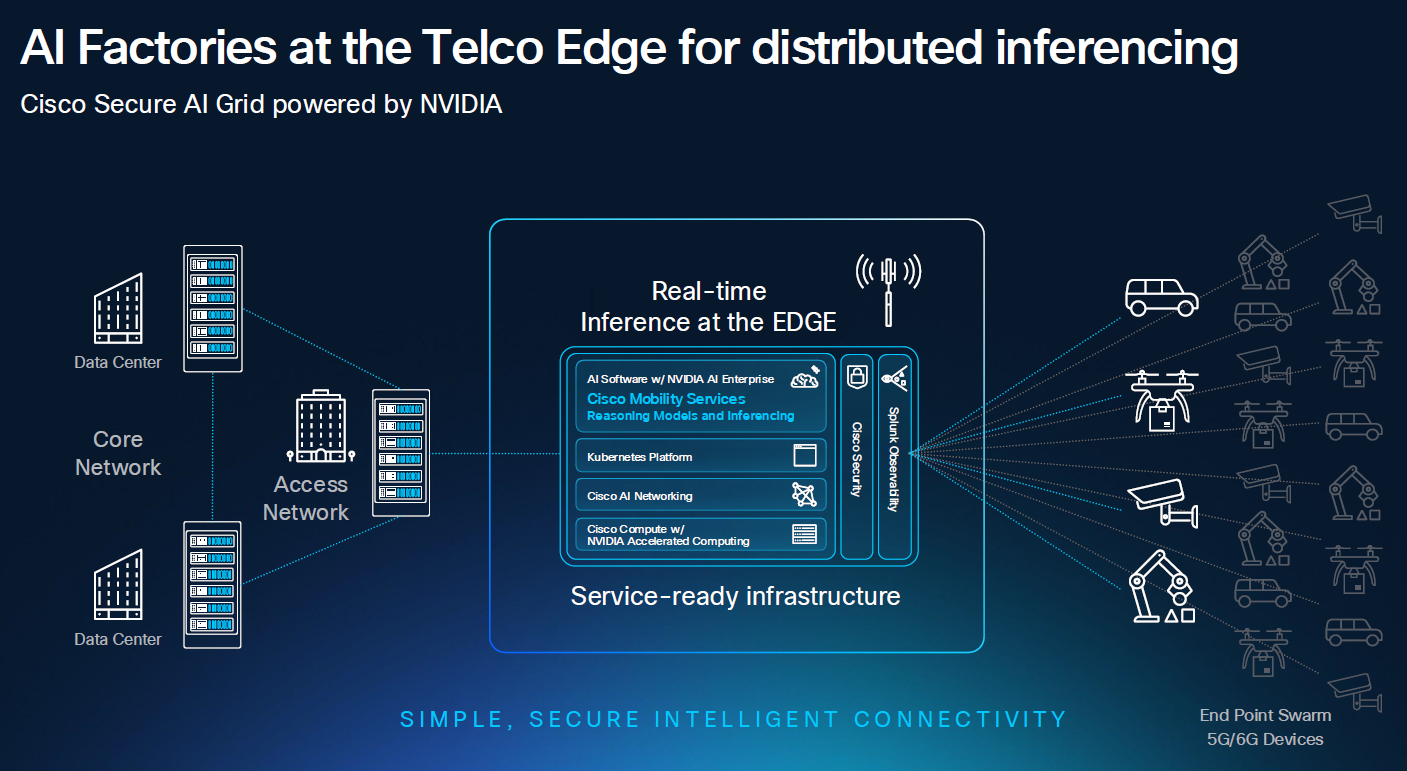

Inferencing requires expansion to the edge

The first generation of the Cisco Secure AI Factory with NVIDIA focused exclusively on the core of the infrastructure—that is, on organizations’ large data centers. However, with the (anticipated) rise of inferencing, an expansion was needed, Wollenweber explains. Not so much in terms of performance, but rather in terms of where the offering is available. “We had to enhance the infrastructure stack we’ve been building,” he tells us. That’s why the Cisco Secure AI Factory with NVIDIA no longer covers just the core data centers, but also includes the edge.

To remain relevant for edge environments, Cisco has added support to the Cisco Secure AI Factory with NVIDIA for NVIDIA’s RTX Pro 4500 Blackwell Server Edition GPUs. This means that Cisco UCS and the Unified Edge portfolio will support these GPUs.

When we talk about the edge, we can’t ignore the service provider’s edge. Mobile connections inevitably come into play there as well. Or, to put it in Wollenweber’s words: “Telcos own the last mile.”

To extend the full-stack approach there as well, the company is introducing Cisco AI Grid with NVIDIA. This is a reference design in which Cisco combines the Mobility Services Platform with NVIDIA RTX Pro Blackwell Series GPUs. The idea is that, thanks to this addition, telcos can offer services via their own networks for AI applications running at the edge. In this way, Cisco also brings that part of the distributed AI infrastructure into the Secure AI Factory offering.

This telco edge story is not just hypothetical, Wollenweber says. In fact, a very big telco will be the launch partner for this part of Cisco’s AI-stack story. He is not allowed to tell us which telco it is, because that news is under embargo until tomorrow.

More choice in hardware

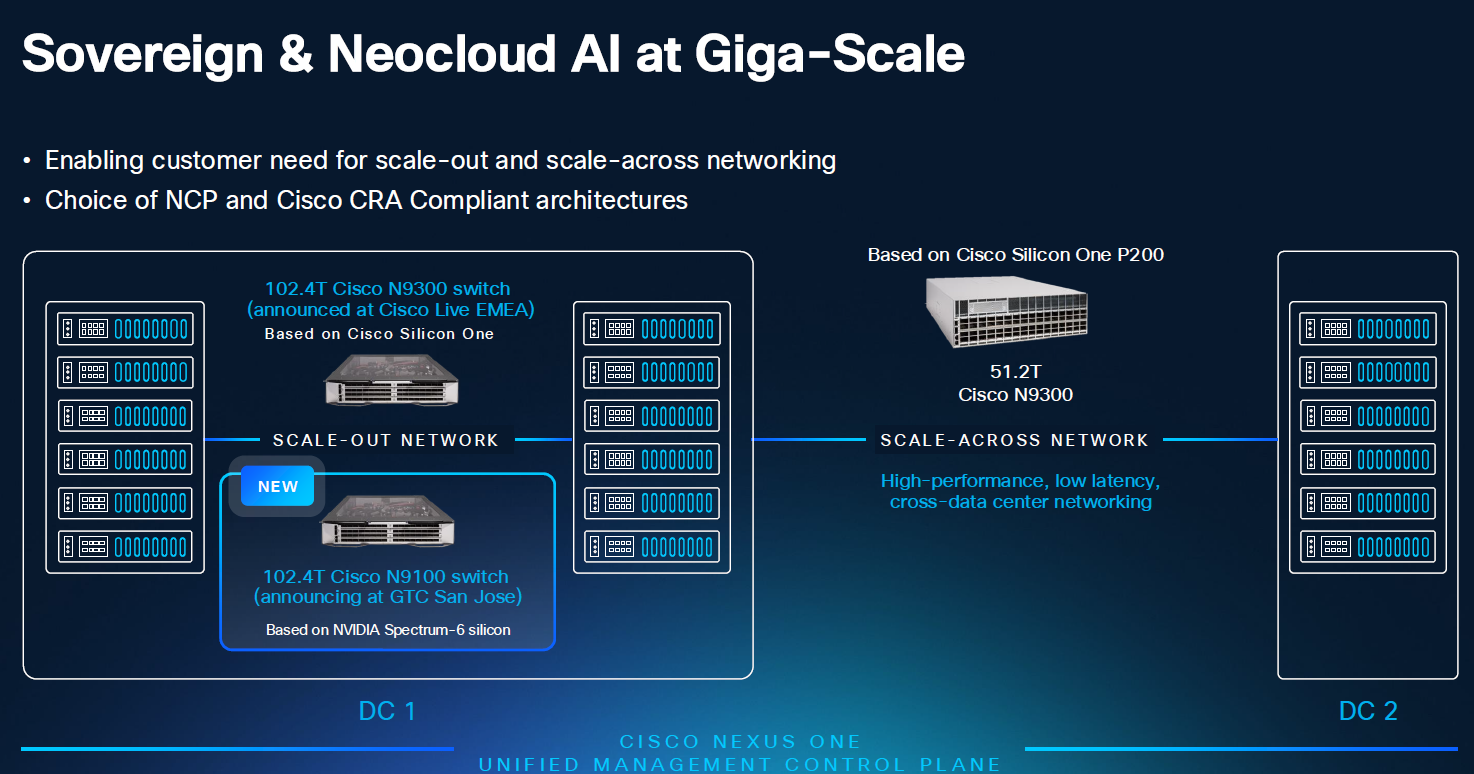

While the Cisco Secure AI Factory with NVIDIA may be a full-stack offering, this does not mean every organization has to choose the same hardware. For example, there was already the Cisco N9100 switch, which features an NVIDIA Spectrum-4 Ethernet chip under the hood. As soon as NVIDIA ships it, support will also be added for the Spectrum-6 Ethernet chip. Like the recently announced Cisco Silicon One G300 chip, the Spectrum-6 can process 102.4 Tbps.

These switches will also be supported within Cisco Nexus One and Cisco Nexus Hyperfabric, which is now also part of Nexus One. Hyperfabric is the complete AI stack that Cisco developed several years ago in collaboration with Nvidia, as well as with VAST Data (which provides the data platform for this product), among others. Nvidia Spectrum-X-based switches are now also becoming available within Nexus Hyperfabric.

The fact that Nexus One can now manage the entire AI infrastructure is something Wollenweber would like to highlight. “Agents are also running on Nvidia DPUs, across the entire compute layer and within the AI NICs themselves,” he notes. In this way, the AI stack is not merely a collection of SKUs on a Cisco GPL (Global Price List)—which, in addition to Nvidia hardware, also includes Red Hat AI software and the VAST Data platform—but involves actual, deeper integration between the various components.

The challenge for Cisco is to offer customers a reasonably closed, complete stack on the one hand, while also providing choices on the other. In this case, customers can choose to purchase an AI Factory that is fully compliant with the Nvidia Cloud Partner (NCP) program. Alternatively, they can opt for a Silicon One-based variant built according to the same design principles.

Security as an extra layer

The Cisco Secure AI Factory with NVIDIA includes the word “secure” in its title for good reason. It was undoubtedly added because the marketing department requested it, but fortunately, there’s more to it than that.

According to Wollenweber, it’s not surprising that AI raises many questions regarding security. Especially when it comes to AI Agents, “agentic identity, policies, and security are becoming increasingly important. If you have agents performing tasks on your behalf, they use your credentials and identity.” In other words, once you stop to think about it for a second, it is clearly essential to secure this properly.

As previously mentioned, Cisco has made a strong commitment to securing AI in recent years. In that regard, AI Defense was a very significant announcement. Specifically for AI Agents, this will now be integrated with Nvidia NeMo Guardrails, a component of the previously mentioned Nvidia AI Enterprise software. This should enable agents working at the edge to securely communicate with agents operating at the core of the AI infrastructure (in the data center).

Finally, Cisco is also expanding the scope of the Hybrid Mesh Firewall within the Cisco Secure AI Factory with NVIDIA. Specifically, this involves an expansion toward NVIDIA’s BlueField DPUs. These are found in the company’s GPU servers and thus also in the fabrics managed by Cisco Nexus One. With this integration, it is possible to enforce specific policies from the Hybrid Mesh Firewall on the DPUs. “So we’re extending security technologies into the NVIDIA stack,” Wollenweber summarizes. This is undoubtedly important, as it allows Cisco to create a security layer that directly covers an increasingly large portion of the AI stack.

More integration, more security, more simplicity

Cisco’s goal with the Secure AI Factory with NVIDIA is clear. With a full-stack approach, it aims to attract customers who want to run AI workloads but want little to no involvement with the underlying infrastructure. By adding an extra layer of security on top of this, it also seeks to convince customers that the offering is a secure choice.

Ultimately, it’s all about integration between the individual components of the stack and the various locations where training and inference take place. A well-integrated stack is, in theory, easier to secure because you can manage SecOps centrally. That’s definitely an advantage. It should also be much easier for customers to get started with it. In any case, the infrastructure itself no longer needs to be pieced together manually. It is built according to a validated design, so it should work out of the box.

Whether all these benefits will immediately lead to widespread adoption of the Cisco Secure AI Factory with NVIDIA remains to be seen, of course. To a certain extent, this full-stack approach is a little ahead of where the market currently stands. The expansion toward the edge in particular, and thus toward inference, may still be a bit premature for quite a few organizations.

Will we see more adoption?

In conversation with us, Wollenweber notes that predicting AI infrastructure adoption is sometimes like reading tea leaves. “We thought enterprise organizations would purchase much more GPU-based compute for inference, but we didn’t see the adoption we had expected,” he reflects on recent years. Much of it went through neoclouds and cloud providers, rather than through their own infrastructure.

This may well have happened because AI infrastructure players like Cisco hadn’t quite got their act together yet. In other words, it was all still too complicated for organizations to handle on their own. It’s also possible that the workloads simply weren’t there yet.

Sovereignty could very well give the AI infrastructure envisioned by Cisco and NVIDIA, such as the Cisco Secure AI Factory with NVIDIA, a boost, provided the use cases and workloads are there, of course. “There’s a strong push to build large sovereign data centers,” Wollenweber observes. “This [the Cisco Secure AI Factory with NVIDIA, ed.] makes sense for that,” he continues. It helps that Cisco has made a major pivot in recent years. According to him, it has largely rebuilt its on-premises stack. Customers can also choose between cloud and on-premises for management.

All in all, the Cisco Secure AI Factory with NVIDIA is a major next step for Cisco’s full-stack approach to AI infrastructure. It makes building an AI infrastructure much more doable for many organizations, at least in terms of deploying it quickly and relatively easily. Because it is tightly integrated on the one hand but also partly modular on the other, it is an approach worth considering for organizations looking for a complete AI stack. Adding the security layer is a smart move too. That takes care of at least part of the huge challenge companies face when it comes to AI.