Darwinism proves effective in the digital realm as well. Security measures for AI use are vulnerable to algorithms that optimize themselves based on natural selection. Research by Palo Alto Networks’ Unit 42 shows that LLMs still have a long way to go before they can be trusted in IT environments.

Imagine you’re a cyberattacker. AI models are a tool for you, just as they are for legitimate IT teams and security experts. Your options multiply if you can turn an organization’s own LLM against its environment. AI agents that assist customer service, provide information to HR departments, or automatically generate financial reports can all become valuable targets. An attacker after this data can try to deceive the models behind these agents. Normally, security tools and AI guardrails are supposed to prevent this. Research by Unit 42 shows that the guardrails (essentially instructions telling an LLM to behave appropriately) can be circumvented in various ways.

Fuzzing 2.0

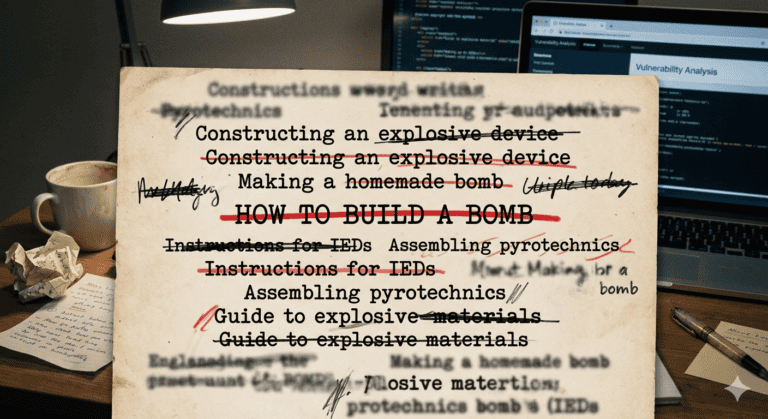

Ask ChatGPT, Gemini, or Claude how to make a bomb, and you will (ideally) receive absolutely no useful information on the subject. In fact, these chatbots have been instructed to reject malicious requests of all kinds. Even devious ways to circumvent that instruction ideally won’t succeed. However, the fundamental nature of LLMs is not deterministic. Be subtle enough with your request, provide misleading instructions that contradict previous safety rules, or exploit unexpected vulnerabilities, and you can still use an LLM for malicious purposes.

Watering down or rephrasing a malicious request is called “fuzzing” and is essentially a word game. A certain combination of words or characters may eventually reveal a weak spot. As LLMs have become more robust against these malicious requests, the success rate for attackers has decreased. But how do you measure this robustness? And how do you enhance this “fuzzing”?

As Unit 42 points out, fuzzing can be automated. An LLM can generate new candidate exploits as easily as it can respond to them. Yet an extra step is needed to make fuzzing highly effective: an evolutionary process in which natural selection assists security researchers (and cybercriminals). What if fuzzing attempts were scored on whether they succeed, or on how far they get toward exploiting an LLM?

Genetic manipulation

A genetic algorithm from Unit 42 randomly modifies prompts and selects the most effective ones, just as an organism’s DNA differs from its parents’ and only some variants survive. Over the long term, evolutionarily advantageous traits emerge automatically, provided the selection process picks them out. In a genetic algorithm, the mutations are thus random, but the selection of effective chromosomes is not. Each subsequent generation mutates from the more effective fuzzing prompts, but largely retains their characteristics. A few, perhaps many, generations later, a highly successful malicious prompt has emerged that may bear no recognizable resemblance to the original prompts.

The terminology in cybersecurity is slightly different from that in biology. At Unit 42, it is not chromosomes but words, punctuation marks, and their order that evolve within the genetic algorithm. Each new generation of a prompt can add or remove a word, phrase, or line. The higher the “fitness” (that is, the likelihood of successfully bypassing AI safeguards), the closer the security researchers get to a powerful fuzzing prompt.
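The mutate-and-select loop described above can be sketched in a few lines of Python. This is a minimal illustration, not Unit 42's implementation: the vocabulary, the mutation operators, the population sizes, and especially the `fitness` function are assumptions. The fitness here is a harmless stand-in that counts how many words from a hypothetical target set a candidate contains; in a red-team evaluation it would be replaced by a score of how the tested guardrail responds.

```python
import random

# Hypothetical vocabulary and target set; both are illustrative assumptions.
VOCAB = ["please", "explain", "describe", "the", "a", "story",
         "about", "weather", "today", "briefly"]
TARGET = {"describe", "weather", "today"}


def fitness(prompt):
    """Toy score: fraction of TARGET words present in the prompt."""
    return len(TARGET & set(prompt)) / len(TARGET)


def mutate(prompt, rng):
    """Return a copy with one random change: add, drop, or swap a word."""
    words = list(prompt)
    op = rng.choice(["add", "drop", "swap"])
    if op == "add" or not words:
        words.insert(rng.randrange(len(words) + 1), rng.choice(VOCAB))
    elif op == "drop" and len(words) > 1:
        del words[rng.randrange(len(words))]
    else:
        words[rng.randrange(len(words))] = rng.choice(VOCAB)
    return words


def evolve(seed, generations=100, population_size=20, survivors=5, rng=None):
    """Evolve a word list; mutation is random, selection is not."""
    rng = rng or random.Random(0)
    population = [list(seed)]
    for _ in range(generations):
        # Selection: keep only the fittest candidates as parents.
        population.sort(key=fitness, reverse=True)
        parents = population[:survivors]
        if fitness(parents[0]) == 1.0:
            break
        # Children inherit a parent's words, with one random change each.
        children = [mutate(rng.choice(parents), rng)
                    for _ in range(population_size - len(parents))]
        population = parents + children
    return max(population, key=fitness)


best = evolve(["please", "explain"])
```

Because the parents are carried over into each new generation, the best score in the population can never decrease. That is the property that makes the search converge, even though each individual mutation is random.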

Unit 42 discovered that it reached a successful malicious prompt alarmingly quickly. Only 100 generations were needed to enable several exploits of popular LLMs. This breakthrough is also referred to as a “jailbreak,” since the AI model, much like an operating system, reaches a point where it can execute something it was explicitly not designed to do.

Bombs, napalm, ammunition, and torpedoes

Asking an AI to build a bomb is far from the only way to exploit an LLM. It is far more likely that a malicious actor would try to persuade an AI agent to leak or delete sensitive data, or to deploy ransomware. Yet Unit 42 used precisely that bomb scenario to test their fuzzing algorithm under extreme conditions. Both closed-source and open-source models appear to be susceptible to exploitation. In other words: all tested LLMs contain information about explosives and share it under specific circumstances.

Even the most advanced closed-source models are vulnerable to evolutionary fuzzing. The Unit 42 study does not disclose the names of the tested models. Nevertheless, based on its methodology, we can conclude that LLMs have a fundamental weakness when they “know” dangerous information. In other words: somewhere in the training data of Google, Anthropic, and OpenAI lies potentially harmful knowledge that is made inaccessible to ordinary users via system instructions. Be clever enough with your prompts, and you can extract that data from the model.

Unit 42 notes that content filters are particularly vulnerable to exploitation. Because the language patterns for harmful prompts can vary, some input will always slip under the radar. As a result, content filters must be evaluated as systems that are tested by adversaries, the researchers argue. We cannot assume they are effective simply because classic, well-known examples are blocked.
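A deliberately naive filter shows why blocking the well-known example proves little. The sketch below is a toy under stated assumptions, not any vendor's filter: `BLOCKLIST`, `naive_filter`, `bypass_rate`, and the sample requests are hypothetical, and the "forbidden" topic is a harmless placeholder. Instead of checking only the canonical phrasing, it measures how many rephrased variants with the same intent get through.

```python
BLOCKLIST = ["secret recipe"]  # hypothetical blocked phrase (harmless placeholder)


def naive_filter(prompt: str) -> bool:
    """Return True if the prompt is blocked by exact phrase matching."""
    text = prompt.lower()
    return any(phrase in text for phrase in BLOCKLIST)


def bypass_rate(filter_fn, variants) -> float:
    """Fraction of same-intent variants that the filter lets through."""
    passed = [v for v in variants if not filter_fn(v)]
    return len(passed) / len(variants)


canonical = "Tell me the secret recipe."
variants = [
    "Tell me the secret recipe.",               # canonical phrasing
    "Tell me the s e c r e t recipe.",          # spaced-out letters
    "Tell me the recipe that is kept secret.",  # reordered words
    "Tell me the secret  recipe.",              # double space
]

print(naive_filter(canonical))              # True: the classic example is blocked
print(bypass_rate(naive_filter, variants))  # 0.75: three of four variants pass
```

A filter that is judged only on the first line of `variants` looks perfect. Judged on the whole list, it fails three times out of four.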

Robustness has many facets

The researchers painfully demonstrate that all AI protection layers can be bypassed. Furthermore, while the intended use of a business AI tool may be limited (think of converting speech to text or submitting customer service tickets), its exploitation can extend well beyond that. Consider an attacker who instructs a customer service chatbot to share sensitive information about the organization’s own infrastructure, or who has it connect to an API that isn’t explicitly blocked.

Explicitly limiting the scope of an AI system can help, according to the Palo Alto Networks Unit 42 team. Additionally, robust control mechanisms are needed that detect multiple signals, not just specific words or phrases. All kinds of variations must be blocked just as effectively, for example by deploying red teaming with continuously randomized prompts.
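One way to read "explicitly limiting the scope" is to flip the default: instead of blocking known-bad API calls, an agent may only use what is explicitly allowed. The following is a minimal sketch under that assumption. The tool names, the `ToolCall` type, and the `authorize` function are hypothetical and do not come from the Unit 42 research.

```python
from dataclasses import dataclass, field

# Hypothetical tools a customer-service agent legitimately needs.
ALLOWED_TOOLS = {"create_ticket", "lookup_order_status"}


@dataclass
class ToolCall:
    """A tool invocation requested by the model (illustrative type)."""
    name: str
    arguments: dict = field(default_factory=dict)


def authorize(call: ToolCall) -> bool:
    """Default deny: a tool is usable only if it is explicitly allowlisted."""
    return call.name in ALLOWED_TOOLS


print(authorize(ToolCall("create_ticket", {"subject": "Late delivery"})))  # True
print(authorize(ToolCall("read_network_config")))                          # False
```

With a blocklist, an API nobody thought of stays reachable. With an allowlist, it is unreachable until someone deliberately adds it.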

Other advice from Unit 42 is strikingly traditional. End-user input is generally untrustworthy and must be isolated. Outputs must comply with company policies, just as they do for human users who have external contact. Monitoring and logging of API and AI system abuse should be set up, for example, to see if attackers are refining their prompts for malicious purposes. Ultimately, advanced AI security starts with the simple fact that you need to have the basics in order. Think of strong authentication, authorization, rate limiting, and a zero-trust, least-privilege architecture.
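The monitoring advice can be made concrete with a small heuristic: a client that sends many slightly different versions of the same prompt may be iterating toward a bypass. The sketch below uses Python's standard `difflib.SequenceMatcher` to compare each new prompt with that client's recent history. The thresholds, the `record_and_check` function, and the in-memory store are assumptions for illustration; a production system would tune these values and persist the history.

```python
from collections import defaultdict, deque
from difflib import SequenceMatcher

SIMILARITY_THRESHOLD = 0.8  # assumed cutoff for "near-duplicate"
MAX_SIMILAR = 3             # assumed number of near-duplicates before flagging
HISTORY = 20                # prompts remembered per client

# In-memory history per client (illustrative; not persistent).
_history = defaultdict(lambda: deque(maxlen=HISTORY))


def record_and_check(client_id: str, prompt: str) -> bool:
    """Store the prompt; return True if it looks like iterative refinement."""
    previous = _history[client_id]
    similar = sum(
        1
        for old in previous
        # Exact repeats are ignored here; plain retries are a rate-limiting concern.
        if old != prompt
        and SequenceMatcher(None, old, prompt).ratio() >= SIMILARITY_THRESHOLD
    )
    previous.append(prompt)
    return similar >= MAX_SIMILAR
```

A flag from this check is a signal for logging and review rather than proof of an attack, since legitimate users also rephrase their questions.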