Google has announced that data engineering and machine learning workloads built on the Apache Spark framework can now run within Kubernetes clusters. The Spark Operator tool is meant to make this possible.

With the beta release of Spark Operator, the tech giant wants to enable end users to get more out of the Apache Spark platform, particularly in combination with Kubernetes clusters.

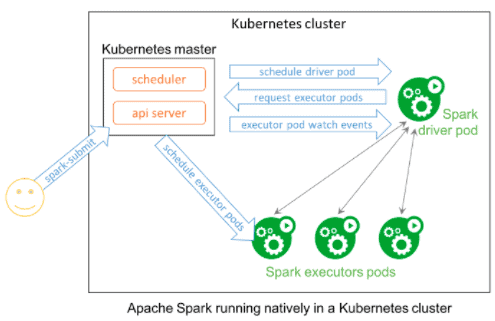

The newly launched Spark Operator is a Kubernetes-based controller that uses custom resources for the declarative specification of Spark applications. Among other things, it supports automatic restarts and cron-based scheduling of applications. Developers, data engineers and data scientists can write declarative specifications describing their Spark applications and use native Kubernetes tooling, such as kubectl, to manage them.
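To give an idea of what such a declarative specification might look like, here is a minimal Python sketch that builds a manifest and prints it as JSON. The API version, field names and values (`sparkoperator.k8s.io/v1beta2`, `restartPolicy`, `schedule`, the image name, the `spark` service account) are assumptions based on the operator's public custom resource definitions and may differ per release, so check them against the version you install. The helper `spark_application` is hypothetical and not part of any library.

```python
import json


def spark_application(name, image, main_file, cron=None):
    """Build a manifest dict for a Spark application (illustrative sketch).

    All field names are assumptions modelled on the operator's custom
    resources; verify them against the installed CRD version.
    """
    spec = {
        "type": "Python",
        "mode": "cluster",
        "image": image,                      # placeholder container image
        "mainApplicationFile": main_file,
        "sparkVersion": "2.4.0",             # placeholder version
        # Automatic restart: retry a failed application a few times.
        "restartPolicy": {"type": "OnFailure", "onFailureRetries": 3},
        "driver": {"cores": 1, "memory": "512m", "serviceAccount": "spark"},
        "executor": {"cores": 1, "instances": 2, "memory": "512m"},
    }
    kind = "SparkApplication"
    if cron is not None:
        # Cron-based scheduling: wrap the spec in a template with a schedule.
        kind = "ScheduledSparkApplication"
        spec = {"schedule": cron, "concurrencyPolicy": "Allow", "template": spec}
    return {
        "apiVersion": "sparkoperator.k8s.io/v1beta2",  # assumed API version
        "kind": kind,
        "metadata": {"name": name, "namespace": "default"},
        "spec": spec,
    }


if __name__ == "__main__":
    manifest = spark_application(
        "example-job", "example.com/spark-py:latest", "local:///opt/app/job.py"
    )
    print(json.dumps(manifest, indent=2))
```

Because kubectl accepts JSON as well as YAML, the output could be piped to `kubectl apply -f -` on a cluster where the operator is already installed. Passing a value such as `cron="0 * * * *"` would produce the scheduled variant instead.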

Exit Mesos and Hadoop

A notable development is that the new tool offers an alternative to running Apache Spark on on-premises or cloud-based Hadoop services, such as Azure HDInsight, Amazon EMR and Google Cloud Dataproc, or on Mesos clusters.

Running Spark workloads within a Kubernetes (k8s) cluster used to be a tough job, especially if end users wanted to run these workloads without Mesos and without being tied to Hadoop YARN. Although earlier versions of Spark gradually added support for this, putting it into practice remained a difficult process.

With the arrival of Spark Operator, these workloads can now run natively within Kubernetes clusters. This ultimately allows end users to roll out these clusters as easily as they would any Spark instance.

Available through GCP Marketplace

Spark Operator is now available directly through the Google Cloud Platform (GCP) Marketplace for Kubernetes. The tool is offered as a Google Click to Deploy container for easy deployment to Google Kubernetes Engine (GKE). Since Spark Operator is open source and can be rolled out to any Kubernetes environment, Helm charts and installation instructions are also available on GitHub.

As a Marketplace service, the tool is currently only available for Kubernetes on GCP. It is not known whether it will also be offered for other managed Kubernetes services, such as Azure Kubernetes Service (AKS) from Microsoft or Elastic Kubernetes Service (EKS) from AWS.

This news article was automatically translated from Dutch to give Techzine.eu a head start.