Oracle has added new functionality to its Container Engine for Kubernetes.

The enhancements are intended for customers who want to build and run cloud-based applications on Oracle Cloud Infrastructure (OCI), for example as microservices developed with flexible DevOps tooling.

The container orchestration platform Kubernetes is well suited to this purpose but, according to Oracle, is often still complex to work with. Moreover, companies often lack employees with the skills to do the work.

The Oracle Container Engine for Kubernetes supports companies with large-scale Kubernetes environments. The new features should help them manage large environments more effectively, run workloads more reliably and optimize resource usage. This, in turn, should ultimately reduce the cost of managing these environments.

Virtual nodes and lifecycle management

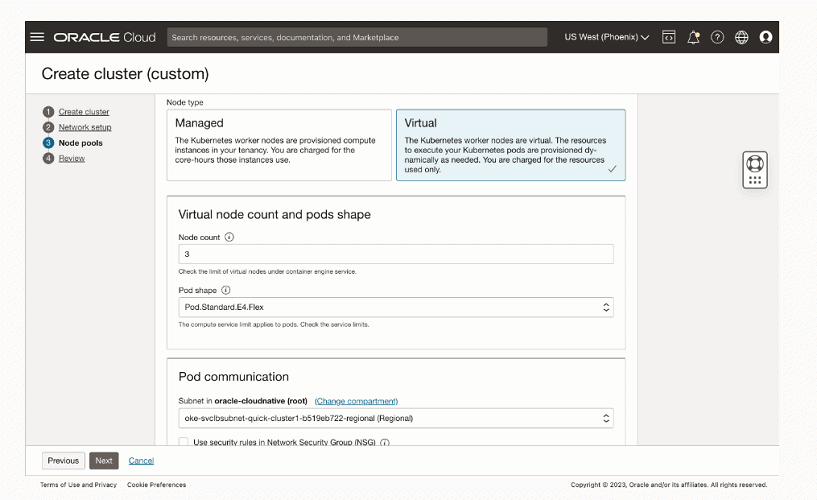

The update comes with serverless virtual nodes. These let enterprises reliably run their Kubernetes-based applications at scale without having to deal with the complexity of managing, scaling, upgrading and troubleshooting the underlying Kubernetes node infrastructure. The virtual nodes also offer more “elasticity” at the pod level, with usage-based pricing.

Another new feature set of the Oracle Container Engine for Kubernetes adds more lifecycle management capabilities. These capabilities give users more flexibility in installing and configuring the operating system or related applications.

For example, the new lifecycle functionality covers essential Kubernetes software that can be deployed on a cluster, such as CoreDNS and kube-proxy. The new capabilities also provide access to other related applications such as Kubernetes Dashboard, Oracle Database Operator, Oracle Database and Oracle WebLogic.

The functionality manages the entire lifecycle of these solutions, from initial deployment and configuration through to ongoing operations such as upgrades, patching, scaling, “rolling” configuration changes and more.

Security enhancements

Furthermore, the enhancements provide more capabilities for identity and access control of workloads, including at the pod level. They strengthen security policies by offering more detailed visibility and permissions at the pod level rather than at the node level.

In addition, new clusters now support up to 2,000 worker nodes by default and can also use low-cost spot instances. Financially backed SLAs further cover uptime and availability for the Kubernetes API server and worker nodes.
