Betty Blocks has introduced a Low-Code AI Toolkit that is meant to let companies build low-code applications with AI features faster and more flexibly. According to the company, this is possible without compromising security or development speed.

With the Low-Code AI Toolkit and its underlying solutions, companies can integrate AI into their (low-code) applications more easily, without having to set up large, complex projects to do so. This should make it easier for them to implement a sustainable AI strategy.

Functionality and use cases

The toolkit helps users connect various LLMs to the Betty Blocks low-code platform. Users can then draw on the models' capabilities in their own applications through the platform's low-code solutions.

In addition, the toolkit is meant to give users more flexibility, so that they do not run into problems when an LLM changes or when new business rules prevent them from accessing a particular model.
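The article does not describe how the toolkit achieves this, but one common pattern is to put a model-agnostic routing layer between the application and the LLMs. The sketch below is a minimal illustration under that assumption, not Betty Blocks' implementation: `ModelRouter`, `ProviderUnavailable`, and the `model-a`/`model-b`/`model-c` providers are hypothetical, and each "provider" is a stand-in function rather than a real LLM client. The router tries models in order of preference, skips any that an allow-list (standing in for business rules) forbids, and falls back to the next model when one is unavailable.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Set


class ProviderUnavailable(Exception):
    """Raised by a (hypothetical) provider that cannot serve a request."""


# Each provider is modelled as a plain function: prompt in, completion out.
Provider = Callable[[str], str]


@dataclass
class ModelRouter:
    providers: Dict[str, Provider]  # hypothetical model name -> provider function
    preference: List[str]           # fallback order, most preferred first
    allowed: Set[str]               # models permitted by the current business rules

    def complete(self, prompt: str) -> str:
        errors: List[str] = []
        for name in self.preference:
            if name not in self.allowed or name not in self.providers:
                continue  # skip models that policy forbids or that are not configured
            try:
                return self.providers[name](prompt)
            except ProviderUnavailable as exc:
                errors.append(f"{name}: {exc}")  # record why, then try the next model
        raise RuntimeError("no allowed model could serve the request: " + "; ".join(errors))


# Stand-in providers for illustration only; a real setup would call LLM APIs here.
def model_a(prompt: str) -> str:
    raise ProviderUnavailable("model retired")


def model_b(prompt: str) -> str:
    return f"[model-b] {prompt}"


def model_c(prompt: str) -> str:
    return f"[model-c] {prompt}"


router = ModelRouter(
    providers={"model-a": model_a, "model-b": model_b, "model-c": model_c},
    preference=["model-a", "model-b", "model-c"],
    allowed={"model-a", "model-c"},  # e.g. a new business rule bars model-b
)
print(router.complete("Summarize this contract"))
```

Because the application only talks to the router, retiring a model or blocking one by policy means changing `preference` or `allowed`, not the application code.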

Betty Blocks outlines several key use cases for connecting multiple LLMs and switching between them. These include targeted document searches, automated document summarization, document creation, simplifying complex texts, anonymizing texts and chatbot functionality.

AI Search Hub

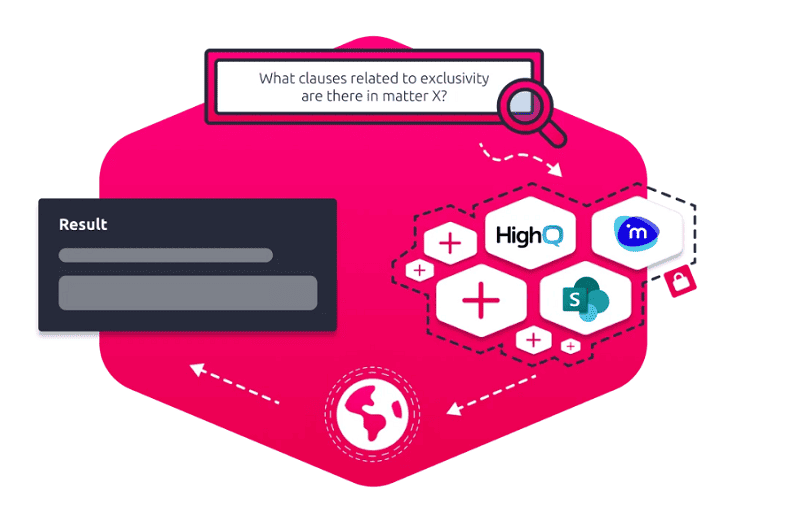

One of the key AI tools in Betty Blocks' Low-Code AI Toolkit is AI Search Hub, which is designed to give companies better document search results. Using LLMs, and drawing on document sources such as Netdocs or SharePoint, the solution tries to understand the semantic context of a search query and presents a list of relevant documents. Each document is assigned a match rate that indicates how closely it corresponds to the query.
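The article does not explain how AI Search Hub computes its match rate. A widely used approach to semantic search is to turn the query and each document into embedding vectors and rank documents by cosine similarity. The sketch below assumes that approach purely for illustration: `rank_documents`, the document titles, and the tiny hand-made three-dimensional vectors are all hypothetical stand-ins for real embeddings produced by an LLM, and the "match rate" here is simply the similarity expressed as a percentage.

```python
import math
from typing import Dict, List, Tuple

Vector = List[float]


def cosine(a: Vector, b: Vector) -> float:
    """Cosine similarity of two vectors; 0.0 if either vector is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def rank_documents(query: Vector, docs: Dict[str, Vector], top_k: int = 3) -> List[Tuple[str, float]]:
    """Return the top_k documents with a match rate in percent (hypothetical scoring)."""
    scored = [(title, cosine(query, vec)) for title, vec in docs.items()]
    scored.sort(key=lambda item: item[1], reverse=True)  # most similar first
    # Clamp negative similarities to 0 and express the score as a percentage.
    return [(title, round(max(0.0, score) * 100, 1)) for title, score in scored[:top_k]]


# Hand-made vectors standing in for LLM embeddings of each document.
docs = {
    "Employment contract template": [0.9, 0.1, 0.0],
    "Office lease agreement": [0.6, 0.0, 0.8],
    "Quarterly sales report": [0.0, 1.0, 0.1],
}
query = [1.0, 0.0, 0.0]  # pretend embedding of a query about contracts

for title, rate in rank_documents(query, docs, top_k=2):
    print(f"{rate:5.1f}%  {title}")
```

In a real system the vectors would come from an embedding model and the documents from a connected repository; the ranking step itself stays the same.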

Betty Blocks’ Low-Code AI Toolkit is available now.