While Microsoft has been getting headlines lately for more than one security misstep, the company has now announced a feature that records all PC users’ actions in the form of the Recall capability on Copilot+ PCs. The data remains local, and users can set their own conditions. Still, we’re not entirely reassured that Microsoft fully understands the potential implications.

Plenty of news came out of the Build 2024 conference in Seattle (and online): think of Fabric, Power Automate and Copilot Runtime, for example. Interesting as those announcements were, the big news came before the conference, during an off-site event where Microsoft announced the Copilot+ PC. These PCs are capable of running Microsoft’s AI assistant Copilot locally.

New feature raises eyebrows

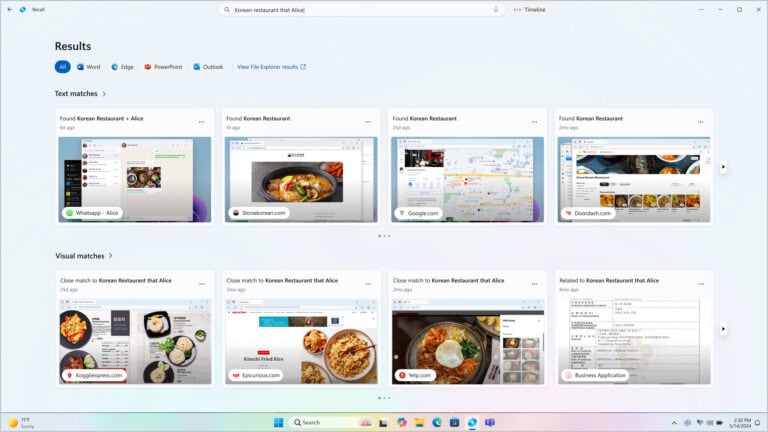

However, the new feature that raised more than one eyebrow is the Recall functionality embedded in the Copilot+ PCs. With Recall, the AI takes a screenshot every few seconds, building up an accurate picture of user behaviour. This makes it possible to ‘recall’ exactly what a user did at a certain date and time: a photographic memory for the PC.

The feature is not tied to a particular app or window; it covers all computer usage. Recall requires about 25 GB of storage for a three-month archive by default. Users can customize the allocated disk space and specify how far back in time the archive should go. When the archive is full, the oldest material goes out first.
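The retention behaviour described above (a storage cap, a maximum age, and the oldest material going out first) can be sketched in a few lines of Python. This is only an illustration of the eviction idea, not Microsoft’s implementation: the class name `SnapshotArchive`, its parameters and the toy sizes are all hypothetical.

```python
from collections import deque
from dataclasses import dataclass, field


@dataclass
class SnapshotArchive:
    """Hypothetical archive with a size cap and a maximum age.

    Illustrative only; this is an assumption, not Recall's actual design.
    """
    max_bytes: int
    max_age_seconds: float
    # (timestamp, size_bytes) pairs, oldest on the left.
    _items: deque = field(default_factory=deque, init=False)
    _used: int = field(default=0, init=False)

    def add(self, timestamp: float, size_bytes: int) -> None:
        """Store a snapshot, then evict until both limits hold again."""
        self._items.append((timestamp, size_bytes))
        self._used += size_bytes
        self._evict(now=timestamp)

    def _evict(self, now: float) -> None:
        # The oldest entry sits at the left end, so it is removed first.
        while self._items and (
            self._used > self.max_bytes
            or now - self._items[0][0] > self.max_age_seconds
        ):
            _, size = self._items.popleft()
            self._used -= size

    def timestamps(self) -> list:
        return [ts for ts, _ in self._items]


# Toy numbers: room for three 100-byte snapshots, kept for at most 100 seconds.
archive = SnapshotArchive(max_bytes=300, max_age_seconds=100)
for ts in (0, 10, 20, 30):
    archive.add(ts, size_bytes=100)
print(archive.timestamps())  # prints [10, 20, 30]
```

The fourth snapshot pushes the archive over its cap, so the snapshot taken at time 0 is dropped. Lowering either limit simply makes the archive forget sooner.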

Convenience is king

The functionality is marketed as a way to easily find out what a user did at any given time. Say you saved a file but can’t remember which folder you put it in, exactly what edits you made to an image, or when you made a hotel reservation in your browser. Fear not: the Recall feature can look up this information for you.

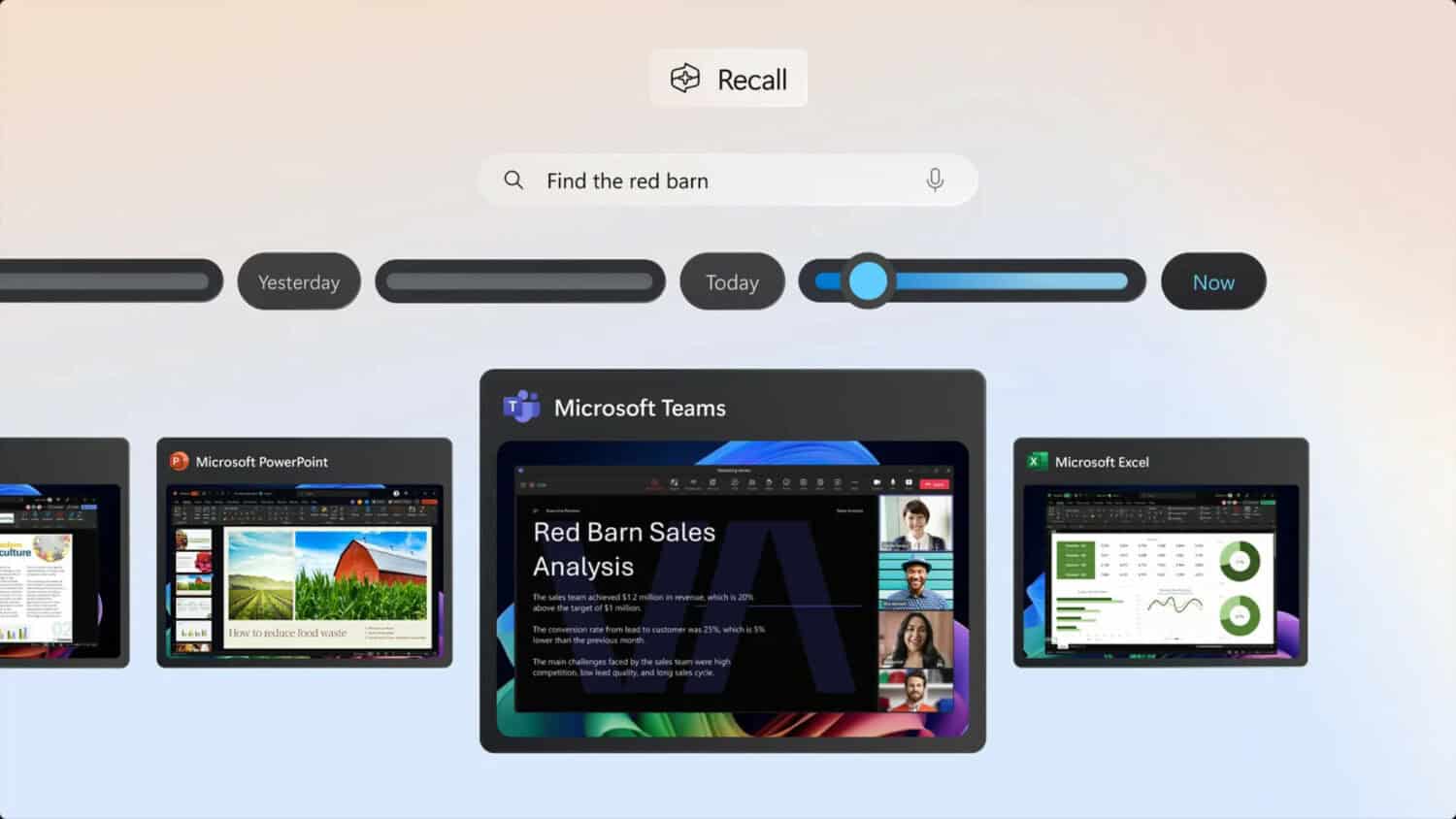

The promise is that this can be done by asking a question in plain human language. For example, Microsoft’s promotional material shows a snapshot in which an image of a red barn is used in PowerPoint. Given the query ‘Find the red barn,’ the AI can retrieve the image from the screenshots and provide context about when the user saw it and in what programme. Hugely useful.

Ability to turn off Recall

Microsoft wants to nip possible criticism of this rather far-reaching AI-powered sleuthing in the bud by ensuring that users can control where Recall is allowed to poke around and where it is not. The Recall functionality can even be turned off completely. Moreover, the data is stored only locally and is inaccessible to third parties.

This did not sound convincing enough for the British government’s data watchdog, the ICO. Spurred on by privacy activists, this body is launching an investigation: it wants to know from Microsoft what security measures it takes to ensure that data does not fall into the wrong hands. In the BBC’s reporting on the matter, several privacy and data experts are already comparing Recall to scenarios from the Netflix series Black Mirror, whose central theme is the catastrophic impact of overreaching tech innovation on people (and humanity in general).

Self-censorship

According to some of them, just knowing that the computer takes a screenshot every few seconds would lead to a form of self-censorship, especially when working on a computer that belongs to your employer and not to you. If the employer makes the use of Recall mandatory, they wonder, to what extent does that affect employees’ computer use? Surely, work is more stressful knowing someone can retrieve exactly what you were doing on your computer when you didn’t answer your phone at 1:56 p.m. (only in theory, of course).

Perhaps some work PC users won’t even know their boss is watching their usage, as one article rightly suggests. An even more sinister example is given at Ars Technica. Besides the obvious (and rather clichéd) example of the porn viewer who is caught out by Recall, this AI-powered photographic memory can pose a serious danger to journalists in conflict zones, dissidents and other ‘enemies of the state’ when their own PC collects evidence against them about the sites they have visited, the documents they have read or the photos they have viewed.

‘A form of spyware’

Some prominent X users, mostly software engineers or tech entrepreneurs, also expressed concerns about the functionality. Some bluntly call it a form of spyware, and despite Microsoft’s soothing words, they point out that such a treasure chest full of screenshots will become a primary target for cyber attacks.

Now, what if your PC falls into the wrong hands, the access code is cracked, or someone gains remote access, and Recall turns out to have saved a screenshot of the document where you keep all your passwords? Recall does not automatically filter out such ‘open’ information. It may be a far-fetched scenario, but remember that not everyone has their security in order as neatly as they should. This means Microsoft makes users more vulnerable by opening up an additional attack vector.

Failed to apply security policies

Perhaps if Microsoft had proven itself to be an extremely solid security partner, this very interesting functionality would have caused less controversy. And therein lies the rub. We recently reported that although Microsoft offers very high-quality security solutions, it sometimes fails to apply its own security policies. We refer specifically to the case in which the company failed to handle certain API keys carefully, allowing hackers to access various Microsoft systems.

Russian and Chinese state hackers managed to penetrate various systems, including the source code of Microsoft products. The Russians from Midnight Blizzard stole email messages between Microsoft and the U.S. government, and the Chinese attackers were able to peek into the mailboxes of U.S. and European policymakers (those responsible for policy toward China) for up to six weeks.

Microsoft’s leadership was found on several occasions not to adhere to known security best practices. This is painful since the company itself, like other security vendors, insists on measures like MFA and zero-trust. Although the company tried to promote transparency about these intrusions, the information was repeatedly inaccurate. Corrections in disclosures also came far too late and were only implemented at the insistence of the U.S. cybersecurity watchdog CSRB.

Fragmented focus on security

Plenty of attention has been paid to security in the coverage of the Build conference, for example in the business variant of the Edge browser. Users there can set restrictions on the use of screenshots of sensitive documents or deny Copilot access to certain files, sites, and other Web-based content. Security was also a focus during the announcement of Azure AI Studio, which allows developers to build, train, and refine AI models.

Despite that attention to specific security situations, Microsoft seems to lack a holistic view of the topic. It is a bit as if the company is locking all back doors and windows (hah) but leaving the front door wide open. The company seems insufficiently aware of the security risks it may be exposing users to through Recall, now that it is turning their PC into an archivist that can serve up everything they have seen, done, watched, or read.

It’s a tech solution by and for the tech industry, conveniently assuming that every computer user has compliance, privacy and data security policies in place. Reality is just a lot messier, and Recall ignores that.

Still, it might be useful

To conclude, the writer of this article had opened dozens of browser tabs for research purposes. At some point, it was no longer clear in which tab a particular article was located. “Now would be a great time to be able to ask Copilot to search for that BBC article with the image of Microsoft boss Satya Nadella,” went through this editor’s head.

So, does convenience ultimately trump security and privacy considerations? Hopefully, that is for each user to decide for themselves. But it would be nice if Microsoft did not make life too easy for hackers, authoritarian regimes, or overactive clerks in any bureaucracy.
