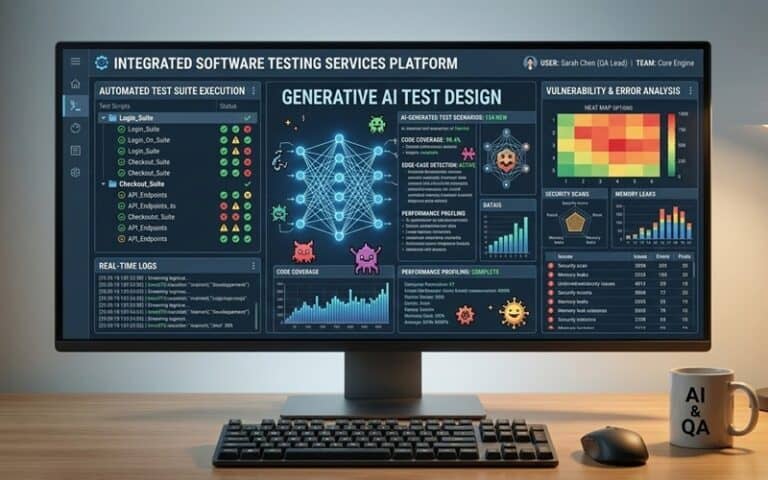

Cloud is for everyone, but not every single thing. This much we know. Generative AI is certainly also for everyone but, according to the technology evangelists who champion this space, it could potentially be applied to every entity in the IT stack. One area where it definitely has applicability is software testing. The primary reasons are that it can automate diverse (and often tedious) test cases, generate realistic data flows and accurately predict bugs through its ability to ingest and analyse codebases before applying human-like reasoning. So what else do we need to know in this arena?

A recent Gartner report forecast a historic year ahead for generative AI investment. Global IT spending is projected to reach $6.15 trillion in 2026, a 10.8% increase from last year. As that investment scales, we’ll continue seeing AI play both developer and tester: writing code, generating tests and shaping product behaviour itself. That said, speed alone doesn’t equate to readiness. As evidenced by the rise in “vibe coding”, the greater challenge isn’t whether AI can produce output, but whether enterprises can validate what it produces at scale.

Two huge fans of generative AI in software testing are Hélder Ferreira, director of product management at Sembi, a company known for technology that unifies software quality and security solutions, and Bruno Mazzotta, solution engineer manager at testRigor, an organisation known for its plain-English, codeless test automation.

AI as a structural layer inside delivery pipelines

“As QA teams increasingly embed generative AI across their workflows, a significant cultural shift is underway in the world of testing: AI is no longer just an accelerator for individual tasks; it’s becoming a structural layer inside delivery pipelines. We’re moving from faster generation to sharper execution, and the QA teams that succeed will be those that prioritise confidence, traceability and risk awareness alongside velocity,” said Ferreira and Mazzotta, in joint conversation with Techzine this month.

In generative AI’s early days, QA teams were just hoping to speed up test case generation. While AI quickly proved it could generate test artefacts in seconds, human testers often found major discrepancies stemming from hallucinations, data biases and misinterpretations of code and logic. In most cases, speed came at the cost of quality and clarity, and testers were left to clean up artefacts that looked correct but lacked context.

Ferreira and Mazzotta suggest that the “illusion of correctness” here is now a broader enterprise concern.

“As AI-generated code and test artefacts enter pipelines more frequently, validation must evolve alongside generation. What holds up in practice is supporting the entire testing lifecycle: AI assisting planning, execution, triage, maintenance and prioritisation while humans retain accountability for what ships. The goal is to create a more intelligent, connected ecosystem that provides full context and flows continuously between phases,” note the pair.

Breaking down how this will work in the real world, Ferreira and Mazzotta offer their proposals for exactly how forward-thinking QA leaders can integrate AI into every layer of the testing lifecycle, including the following four key areas:

#1 Test data creation: AI proposes scenario-based datasets aligned to edge conditions and business rules. For example, generating invoice records with partial payments, expired entitlements, conflicting tax rules, or boundary-date cutoffs. Testers review and approve datasets before use, ensuring masking policies and compliance constraints are preserved.

#2 Exploratory testing: AI suggests high-risk prompts or workflow variations based on recent code changes, such as combining new filtering logic with legacy permissions or stress-testing multi-step user flows. Testers curate the suggestions, prioritising scenarios most likely to expose behavioural drift or unintended edge cases.

#3 Defect triage: When multiple test failures occur after a deployment, AI clusters related failures, highlights shared root-cause indicators and summarises probable regression patterns. Instead of manually combing through logs, quality and engineering teams can focus on validating and resolving the highest-impact issue first.

#4 Context-aware execution: After a code or model update, AI can analyse change history and historical defect patterns to recommend a targeted subset of regression tests. Instead of re-running entire suites, teams focus on the scenarios most likely to expose meaningful risk.
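
To make the defect triage idea in #3 more concrete, here is a minimal sketch of failure clustering. It is not an AI model and it is not how Sembi or testRigor implement triage; it simply normalises failure messages so that failures differing only in numbers or memory addresses fall into one group, which is the kind of grouping an AI-assisted triage step would aim to produce. The function names, test names and failure messages are all hypothetical.

```python
import re
from collections import defaultdict


def signature(message: str) -> str:
    """Normalise a failure message so variable details do not split clusters."""
    sig = re.sub(r"0x[0-9a-fA-F]+", "<hex>", message)  # memory addresses
    sig = re.sub(r"\d+", "<n>", sig)                    # ids, durations, counts
    return sig.strip().lower()


def cluster_failures(failures):
    """Group (test_name, message) pairs by signature, largest cluster first."""
    clusters = defaultdict(list)
    for test_name, message in failures:
        clusters[signature(message)].append(test_name)
    return sorted(clusters.items(), key=lambda item: len(item[1]), reverse=True)


# Hypothetical failures collected after a deployment.
failures = [
    ("test_invoice_partial_payment", "TimeoutError: billing-api did not respond in 3000 ms"),
    ("test_invoice_expired_entitlement", "TimeoutError: billing-api did not respond in 4500 ms"),
    ("test_tax_rule_conflict", "AssertionError: expected 20 got 19"),
    ("test_invoice_boundary_date", "TimeoutError: billing-api did not respond in 3100 ms"),
]

clusters = cluster_failures(failures)
for sig, tests in clusters:
    print(f"{len(tests)} failure(s): {sig}")
```

In this sketch the three timeout failures collapse into a single cluster, so a team would look at the billing dependency first rather than reading three separate logs. A human still decides whether the shared signature really points to a shared root cause.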

When AI is empowered to operate across the entire system instead of simply optimising individual steps, it becomes connective tissue – linking intent, execution, and feedback. The result is more alignment, rather than more artefacts.

Establishing a quality intelligence layer

“As delivery speeds up, QA teams can’t let themselves become bottlenecks or friction points. Integrating an end-to-end connection across the lifecycle is essential to ensuring that AI maintains quality, and human testers can support faster delivery,” explain Ferreira and Mazzotta, who detail below what a quality intelligence layer looks like in practice:

- Test intent is anchored in a test management system: Preserving why something matters and what risk it covers.

- Execution happens in an automation layer built for resilience: Stable, explainable, and observable.

- AI merges test intent and execution: Translating requirements into maintainable checks, flagging drift, correlating results, and helping teams prioritise what to test next, based on change impact and business risk.

This risk-based prioritisation is where AI’s value becomes strategic. Instead of simply producing more tests, AI can help quality teams decide which coverage matters most after a model update, a code change, or a UI shift.
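
As a rough illustration of risk-based prioritisation, the sketch below ranks regression tests by combining change impact, business risk and historical failure rate. Everything here is an assumption made for the example: the `RegressionTest` fields, the 0.5/0.3/0.2 weights and the file names are hypothetical, and a real system would derive coverage and risk from its own test management and change history data.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RegressionTest:
    name: str
    covers: frozenset         # source files the test exercises (assumed known)
    business_risk: float      # 0..1, set by humans in the test management system
    past_failure_rate: float  # 0..1, taken from historical runs


def change_impact(test, changed_files):
    """Fraction of the files a test covers that were touched by the change."""
    if not test.covers:
        return 0.0
    return len(test.covers & changed_files) / len(test.covers)


def risk_score(test, changed_files):
    """Weighted blend of the three signals; the weights are illustrative only."""
    return (0.5 * change_impact(test, changed_files)
            + 0.3 * test.business_risk
            + 0.2 * test.past_failure_rate)


def select_tests(tests, changed_files, budget):
    """Return up to `budget` test names touched by the change, riskiest first."""
    touched = [t for t in tests if change_impact(t, changed_files) > 0]
    ranked = sorted(touched, key=lambda t: risk_score(t, changed_files), reverse=True)
    return [t.name for t in ranked[:budget]]


tests = [
    RegressionTest("checkout_flow", frozenset({"cart.py", "payment.py"}), 0.9, 0.2),
    RegressionTest("profile_edit", frozenset({"profile.py"}), 0.3, 0.1),
    RegressionTest("invoice_export", frozenset({"invoice.py", "payment.py"}), 0.6, 0.5),
    RegressionTest("search_filter", frozenset({"search.py", "permissions.py"}), 0.5, 0.4),
]
changed = {"payment.py", "search.py"}

print(select_tests(tests, changed, budget=2))
```

Tests that do not touch any changed file are excluded outright, and the rest are ordered so that a limited execution budget goes to the scenarios carrying the most risk. Keeping `business_risk` as a human-set value reflects the point that test intent stays anchored in the test management system.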

“When intent, execution, and results live separately, teams lose context and traceability. When connected, AI reinforces signals, strengthening core DevOps principles such as shared accountability, transparent workflows, and faster, informed release decisions. For this model to work at enterprise scale, quality systems must be adaptive and explainable. Acceleration without visibility creates risk; adaptive, explainable automation creates confidence,” clarify Ferreira and Mazzotta.

Prioritising oversight & humans-in-the-loop

As intelligent automation transforms testing, QA teams must ensure that AI remains aligned with business intent and governance standards. While unchecked automation can trigger instability, bias, and compliance issues, human testers can help mitigate these risks by validating AI’s intent and ensuring that quality isn’t sacrificed for more output.

Ferreira and Mazzotta say that human testers can ensure quality by:

- Reviewing AI-suggested test repairs before integrating into the main branch.

- Auditing AI-generated test data to ensure compliance with regulatory standards.

- Overriding AI risk scores when a different release priority is suggested.

This isn’t about slowing delivery, say the pair. It’s about preserving decision authority. AI can propose; humans approve. Over time, structured feedback helps AI systems learn what quality looks like within a given organisation, reinforcing standards rather than drifting from them.
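
The "AI can propose; humans approve" principle can be sketched as a simple approval gate. This is an illustrative model only: the `Proposal` class, the `review` and `mergeable` functions and the reviewer name are hypothetical and do not correspond to any particular tool's API.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Decision(Enum):
    PENDING = "pending"
    APPROVED = "approved"
    REJECTED = "rejected"


@dataclass
class Proposal:
    """An AI-suggested change, such as a test repair, awaiting human review."""
    summary: str
    ai_risk_score: float
    decision: Decision = Decision.PENDING
    reviewer: Optional[str] = None
    final_risk_score: Optional[float] = None


def review(proposal, reviewer, approve, risk_override=None):
    """Record the human decision; the reviewer may override the AI risk score."""
    proposal.reviewer = reviewer
    proposal.decision = Decision.APPROVED if approve else Decision.REJECTED
    proposal.final_risk_score = (
        proposal.ai_risk_score if risk_override is None else risk_override
    )
    return proposal


def mergeable(proposal):
    """Only proposals explicitly approved by a named reviewer may be merged."""
    return proposal.decision is Decision.APPROVED and proposal.reviewer is not None


repair = Proposal("Update locator for checkout button", ai_risk_score=0.2)
print(mergeable(repair))  # nothing merges before a human has reviewed it

review(repair, reviewer="tester_a", approve=True, risk_override=0.7)
print(mergeable(repair), repair.final_risk_score)
```

Recording both the AI score and the human override keeps an audit trail, and that record is also the structured feedback a system could later learn from.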

Testing AI-infused products introduces additional nuance. LLM features, copilots, and assistants rarely produce identical outputs twice. Validation shifts from static assertions to intent-based checks: Did it complete the task? Did it respect policy constraints? Did behaviour change after the latest update?
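
A minimal sketch of what an intent-based check could look like follows. Instead of asserting an exact output string, it asks whether the response contains the facts the task required and whether it avoids patterns that policy forbids. The substring and regular-expression matching used here is a deliberate simplification, and the function name, the example responses and the card-number pattern are all assumptions made for illustration.

```python
import re
from dataclasses import dataclass


@dataclass
class IntentCheck:
    name: str
    passed: bool


def check_response(response, required_facts, forbidden_patterns):
    """Evaluate one response against task and policy intent, not exact wording."""
    lowered = response.lower()
    task_completed = all(fact.lower() in lowered for fact in required_facts)
    policy_respected = not any(re.search(p, response) for p in forbidden_patterns)
    return [
        IntentCheck("task_completed", task_completed),
        IntentCheck("policy_respected", policy_respected),
    ]


required = ["refund", "$40"]
forbidden = [r"\b\d{16}\b"]  # hypothetical rule: never echo a full card number

# Two differently worded answers to the same request, plus one policy breach.
answer_a = "Your refund of $40 has been issued to your original payment method."
answer_b = "We have issued a refund: $40 will return to the card you paid with."
answer_c = "Refund of $40 sent to card 4111111111111111."

for answer in (answer_a, answer_b, answer_c):
    results = check_response(answer, required, forbidden)
    print({check.name: check.passed for check in results})
```

The first two answers differ in wording yet both pass, while the third completes the task but fails the policy check. Running the same checks before and after an update is one way to spot a change in behaviour without pinning tests to exact text.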

In other words, oversight ensures that acceleration does not outpace accountability.

The future of QA: confidence is a key metric

“In 2026 and beyond, the differentiator will not be how much AI an organisation deploys, but how well it governs what AI produces. GenAI will continue expanding across code generation, automation, and product features. But as AI-generated artefacts increase, so does the need for traceability, explainability, and structured validation,” conclude Ferreira and Mazzotta.

Sustainable speed here isn’t about more automation; it’s about the right kind of automation, embedded across the lifecycle, connected to intent, aligned with risk, and grounded in human oversight. If QA can’t trace what was tested, what changed between runs, or why it matters, AI simply accelerates output. If they can, AI becomes something more powerful: an intelligent quality layer that helps organisations ship faster and with confidence.