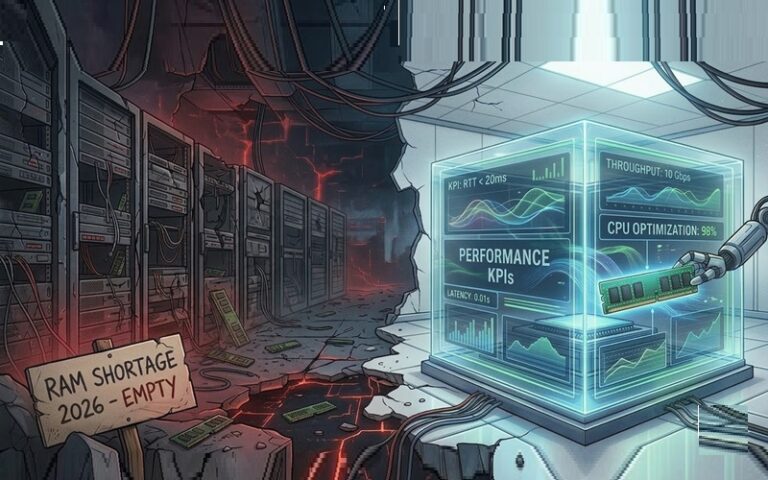

Something is rotten in the state of software development. The birth of what we might call RAMpocalypse came about because hardware is (or was) cheap and programmers are expensive. That means, if an application is sluggish or a memory leak is looming, throw more RAM-based memory at the software product and slap on some faster CPUs and denser storage. But this is not software application efficiency personified, is it? So what should we do about it?

The RAMpocalypse problem is now compounded because hardware’s expensive too, and it might stay that way well into next year. It doesn’t matter whether a developer is building a video game for consoles, a streaming app for phones, a smart coffee machine, a car infotainment system, or a Bluetooth-connected yoga mat. The shortage of semiconductors has thrown a spanner in the works of how every company builds software-defined products.

But a RAMpocalypse doesn’t have to be all doom and gloom. Maurice Kalinowski, director of product management at Qt Group, thinks there are pathways out of the impending blast radius of this cataclysm.

“If rising costs extend hardware lifecycles to the point where businesses are holding onto old hardware for longer, then it might ironically be the thing that encourages developers to rekindle their love for software optimisation. It’s time for software to start doing the heavy lifting. But for that to happen, developers of desktop software (and mobile apps especially) may want to look back to the lean and mean principles of embedded systems,” said Kalinowski.

The prototype dilemma

For Kalinowski, this all comes down to what he calls the common prototype dilemma and it goes something like this.

Teams build a proof-of-concept for a cool new product… and maybe the concept is really cool. But what do humans naturally do when they’re on the cusp of building something cool? They get excited! So naturally, they reach for the high-level programming language that’s easiest to work with but is heavy on garbage collection.

“At that moment, everything is still fine. After all, a proof-of-concept isn’t designed to meet efficiency standards; it’s designed to show off what features are possible. The problems arise when excitement turns into a rushed production phase. Under pressure to meet a go-to-market deadline, the proof of concept (POC) code, which may be bloated and unoptimised, is simply shipped. Sometimes, that’s just how the cookie crumbles. No software development cycle is perfect, which is why the concept of Minimum Viable Product (MVP) exists. You make the best decisions you can under the circumstances to make sure the product ships with the essentials covered,” said Kalinowski.

The trouble is that, in the RAMpocalypse, developers will need to apply a lot more scrutiny to memory as a Key Performance Indicator (KPI). If a software stack requires 3.5GB of RAM, but the hardware team can only procure a 2GB module at a profitable price point, there is no longer a minimum viable product.

Shift left, this time on performance

Software developers often talk about ‘shifting left’ when they mean moving testing to an earlier stage in the lifecycle. The argument from the Qt man here is that we now need to do the same for performance.

That will mean that performance and memory utilisation will no longer be afterthoughts. They become core KPIs from day one. Mobile and desktop developers, however, have understandably become spoiled by high-end devices. They develop on the latest iPhone or a RAM-heavy Surface, when the end user may very well be stuck with a much older and resource-constrained device like a Pixel 6.

“There’s a page or two we ought to take from the embedded world. Ask any developer who’s worked on embedded systems and they will likely tell you that it’s very common for them to be told that they have a hard cap on resources. They may receive edicts such as ‘You can only use a maximum of 15% of the CPU for the UI’ and that’s an extremely useful discipline for desktop and mobile developers in particular to apply to their workflow,” said Kalinowski.

Don’t wait, act now

The advice is not to wait for users to complain that an app is hogging up resources while Teams and Discord are running in the background. Developers need to set clear thresholds in their development infrastructure that prevent builds from passing if they break resource barriers.
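One way such a threshold might look in practice is a small check that a build job runs against a representative workload. The sketch below is illustrative only: it relies on Python’s standard `tracemalloc` module, which traces allocations made through Python’s own memory allocators rather than the whole process footprint, and the names `MEMORY_BUDGET_BYTES`, `within_budget`, `lean_workload` and `heavy_workload` are hypothetical, as is the 8 MiB figure.

```python
import tracemalloc

# Hypothetical budget: peak traced allocation allowed for one workload.
MEMORY_BUDGET_BYTES = 8 * 1024 * 1024  # 8 MiB, an illustrative figure


def peak_allocation(workload):
    """Run `workload` and return the peak number of bytes traced."""
    tracemalloc.start()
    try:
        workload()
        _current, peak = tracemalloc.get_traced_memory()
    finally:
        tracemalloc.stop()
    return peak


def within_budget(workload, budget_bytes=MEMORY_BUDGET_BYTES):
    """Return (ok, peak); ok is False when the workload exceeds the budget."""
    peak = peak_allocation(workload)
    return peak <= budget_bytes, peak


def lean_workload():
    # A generator expression produces values one at a time.
    return sum(i * i for i in range(100_000))


def heavy_workload():
    # Materialises a 32 MiB buffer before using it.
    data = bytearray(32 * 1024 * 1024)
    return len(data)
```

A build step could call `within_budget` on a real workload and exit with a non-zero status when the first value is `False`, which is what stops the build from passing.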

“Your choice of programming language is arguably the single biggest factor in a resource footprint. Lots of people love working with Python because of the convenience and fast iteration it offers. Is it the most performance-friendly? Not really (though there’s been progress on that front in recent years), so if a developer is working with a programming language that does lots of garbage collection, they don’t have the control you need over what’s happening… and that can lead to memory usage piling up. On the other hand, system languages like Rust, C++ and Zig are seeing a resurgence because they do offer that granular control necessary to thrive in a resource-constrained environment,” explained Kalinowski.

That said, he notes that it’s not just about the programming language. Developers have to clamp down early on the technical debt of the entire technology stack. It’s rather like decluttering an attic: sometimes that means making some tough decisions early about what not to hoard. For a developer, that means auditing every library they add to their project for its impact on CPU and memory utilisation.

They need to ask themselves, “Do I really need this whole library, or just parts of it?”
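A rough first pass at that kind of audit could be to measure what simply importing a dependency costs. The following is a minimal sketch rather than a complete profiler: `import_cost` and `ImportCost` are hypothetical names, and the measurement only covers memory allocated through Python’s allocators, so anything a native extension requests directly from the operating system is not counted.

```python
import importlib
import sys
import time
import tracemalloc
from collections import namedtuple

# Hypothetical result type for this sketch.
ImportCost = namedtuple("ImportCost", "seconds retained_bytes already_loaded")


def import_cost(module_name):
    """Measure the time and traced memory used by importing a module.

    If the module is already in sys.modules the import is a cache hit and
    the figures will be close to zero, so `already_loaded` is reported too.
    """
    already_loaded = module_name in sys.modules
    tracemalloc.start()
    started = time.perf_counter()
    try:
        importlib.import_module(module_name)
        seconds = time.perf_counter() - started
        # First value: bytes still allocated once the import has finished.
        retained_bytes, _peak = tracemalloc.get_traced_memory()
    finally:
        tracemalloc.stop()
    return ImportCost(seconds, retained_bytes, already_loaded)
```

Running this in a fresh interpreter for each candidate dependency gives comparable figures; comparing a whole package against only the submodule that is actually needed is one way to approach the question above.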

The return of the optimisation expert?

Kalinowski thinks that there’s an argument to be made that all developers should apply these best practices. But when people ask, ‘is software optimisation a lost art?’, he says that maybe the most obvious answer is this: many businesses don’t actually have a domain expert on software optimisation. To weather the RAMpocalypse, this discipline must make its return. We need experts who understand the ‘low-level’ tiny aspects of building software that sum to big savings.

Think about something as basic as this: how do you draw an image? There are so many ways to draw it. A novice might consider a 200×200 pixel image as an insignificant task and just load the asset into memory, but an optimisation expert knows there are various compression methodologies and ways to forward the task to the GPU to save system RAM.
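To put rough numbers on that image, here is a back-of-the-envelope sketch. It assumes the asset is decoded to 32-bit RGBA (4 bytes per pixel) and, for comparison, stored in a block-compressed GPU texture format such as BC1, which packs each 4×4 pixel block into 8 bytes. Neither format is named in the argument above; both are assumptions chosen for illustration, as are the function names.

```python
def decoded_size_bytes(width, height, bytes_per_pixel=4):
    """Size of an uncompressed bitmap, assuming 32-bit RGBA by default."""
    return width * height * bytes_per_pixel


def block_compressed_size_bytes(width, height, block=4, bytes_per_block=8):
    """Size of a block-compressed texture (defaults match BC1: 4x4 -> 8 bytes)."""
    blocks_x = -(-width // block)   # ceiling division
    blocks_y = -(-height // block)
    return blocks_x * blocks_y * bytes_per_block


raw = decoded_size_bytes(200, 200)                  # 160000 bytes
compressed = block_compressed_size_bytes(200, 200)  # 20000 bytes
print(raw, compressed, raw // compressed)           # prints "160000 20000 8"
```

One such image is small either way, but an interface holding a hundred of them uncompressed would need about 16 MB, which is where the choice of representation starts to matter on a constrained device.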

Remember your history, kids

“History gives us a template to work with. Companies like Nokia once had dedicated teams who did nothing but go through software projects and optimise code to make it run faster and leaner. We are already entering an era where that specialised skill set is once again the difference between a profitable product and a failed one,” said Kalinowski.

As we move into the AI era, the temptation to use fast but heavy AI-generated code will be strong. Qt Group’s Kalinowski concludes by saying that, like every previous hardware shortage, the RAMpocalypse will ease up sooner or later. When it does, he insists that the discipline of software optimisation must stay with us.