Generative AI has hit significant scaling limits. Researchers are attacking this problem in numerous ways, with compression emerging as a fruitful approach, if done right. Small language models have long been promised to perform nearly as well as their much larger LLM counterparts, but reality has quickly squashed such notions. Now, the size constraints for both AI data and AI memory are being smashed by TurboQuant, a novel compression technique from Google Research.

TurboQuant centers on a six-fold reduction in KV cache size. The KV cache is essentially the working memory of an LLM, and its scaling has kept AI researchers busy for years. Ever-expanding context windows have often relied on innovations around the KV cache, giving AI models much more utility with large datasets. In short, it is key to achieving complex, consistently performing AI workloads. On 8 H100 GPUs, attention performance (part of an LLM’s computations) jumps by 8x thanks to TurboQuant’s implementation.
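To get a feel for what a six-fold reduction means, here is a rough back-of-the-envelope sketch. It uses the standard way of sizing a KV cache (keys plus values, per layer, per token); the model shape and context length are hypothetical values picked for illustration and do not describe any Google model.

```python
def kv_cache_bytes(layers, kv_heads, head_dim, seq_len, bytes_per_value, batch=1):
    """Bytes needed to hold keys and values for every layer and every token."""
    # Factor 2: one tensor for keys, one for values.
    return 2 * layers * kv_heads * head_dim * seq_len * bytes_per_value * batch


# Hypothetical model shape, chosen only for illustration.
LAYERS, KV_HEADS, HEAD_DIM = 32, 8, 128
CONTEXT_TOKENS = 128_000

# Baseline: every cached value stored as a 16-bit number (2 bytes).
baseline = kv_cache_bytes(LAYERS, KV_HEADS, HEAD_DIM, CONTEXT_TOKENS, bytes_per_value=2)

# Apply the six-fold reduction the article reports for TurboQuant.
compressed = baseline / 6

print(f"16-bit KV cache:      {baseline / 2**30:.1f} GiB")
print(f"after 6x compression: {compressed / 2**30:.1f} GiB")
```

Because the cache grows linearly with the number of tokens, the same memory budget would hold roughly six times as much context under these assumptions.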

Another ‘DeepSeek moment’

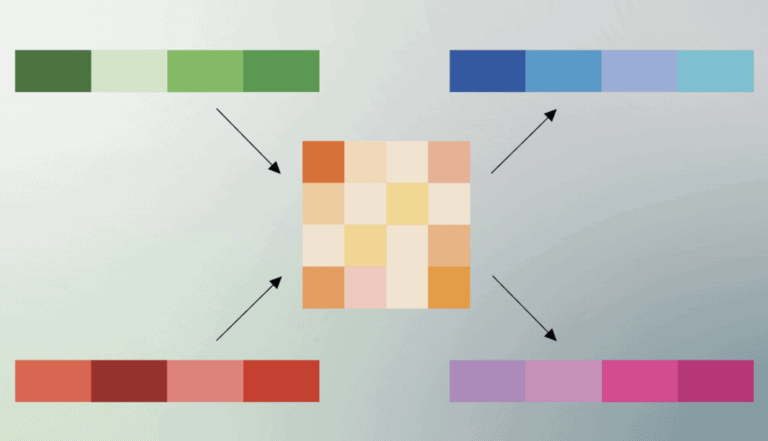

TurboQuant achieves high-quality compression through what the researchers call PolarQuant. This simplifies the data’s effective shape while largely maintaining its meaning. Another step, itself efficiently stored, checks for errors in the earlier compression. Numerous tricks have already proven successful in Google’s research to establish the KV cache data’s integrity.
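The general idea of describing data by direction and length rather than by raw coordinates can be shown with a toy example. The sketch below is not the researchers' algorithm: it simply converts pairs of coordinates to a radius and an angle, then stores the angle in a few bits. Keeping the radius at full precision, the choice of `angle_bits`, and all function names are assumptions made for illustration. The `relative_error` helper only measures how much was lost; it stands in for, and does not reproduce, the error-checking step described above.

```python
import math


def polar_quantize(vec, angle_bits=4):
    """Encode consecutive coordinate pairs as (radius, angle bucket index)."""
    levels = 1 << angle_bits
    step = 2 * math.pi / levels
    encoded = []
    for x, y in zip(vec[0::2], vec[1::2]):
        radius = math.hypot(x, y)                  # kept at full precision (assumption)
        angle = math.atan2(y, x) % (2 * math.pi)   # map the angle to [0, 2*pi)
        index = round(angle / step) % levels       # nearest of `levels` directions
        encoded.append((radius, index))
    return encoded


def polar_dequantize(encoded, angle_bits=4):
    """Rebuild approximate coordinates from (radius, angle bucket index) pairs."""
    step = 2 * math.pi / (1 << angle_bits)
    restored = []
    for radius, index in encoded:
        restored.append(radius * math.cos(index * step))
        restored.append(radius * math.sin(index * step))
    return restored


def relative_error(original, restored):
    """Length of the difference vector divided by the length of the original."""
    diff = math.sqrt(sum((a - b) ** 2 for a, b in zip(original, restored)))
    norm = math.sqrt(sum(a * a for a in original))
    return diff / norm


vector = [0.8, -0.3, 0.1, 0.5, -0.7, -0.2, 0.4, 0.9]  # even length, made-up values
coarse = polar_dequantize(polar_quantize(vector, angle_bits=4), angle_bits=4)
fine = polar_dequantize(polar_quantize(vector, angle_bits=8), angle_bits=8)

print(f"4-bit angles: relative error {relative_error(vector, coarse):.3f}")
print(f"8-bit angles: relative error {relative_error(vector, fine):.3f}")
```

In this toy version the length of each pair is preserved exactly and only the direction is rounded, so the error shrinks quickly as more bits are spent on the angle.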

In short, as put by Cloudflare CEO Matthew Prince, “this is Google’s DeepSeek”. This refers to the breakthrough the Chinese DeepSeek team made with R1, a reasoning model that performed at nearly the same benchmark level as OpenAI’s then-state-of-the-art o1. However, the LLM was open-source, far smaller than o1 reportedly was, and a successful implementation of complex optimization as well as compression. One key allegation made by American AI labs against their Chinese rivals is that DeepSeek and others are training their models on the outputs of the large, advanced LLMs made by OpenAI, Anthropic and Google. They are said to distill the knowledge in these models, preserving most of the AI capabilities with dramatically fewer parameters and at a far smaller compute cost for both training and inference.

Just like parameter count, KV cache is one of multiple factors at play for LLMs. The compression achieved by TurboQuant will likely benefit vector search as well. This process focuses on finding relevant data stored as vectors. Its practical applications range from recommendation engines to connecting business data to LLMs through RAG. Vector databases have become highly relevant for such use cases and will be traversed far more quickly with the breakthroughs TurboQuant presents.
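For readers unfamiliar with vector search, the following minimal sketch shows the core operation: ranking stored vectors by how closely they point in the same direction as a query. The documents, their three-dimensional vectors, and the crude rounding function are all made up for illustration; the rounding is a generic stand-in for compression and is not TurboQuant.

```python
import math


def cosine(a, b):
    """Cosine similarity: 1.0 means the two vectors point the same way."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def search(query, index, k=2):
    """Brute-force search: score every stored vector, return the top-k names."""
    ranked = sorted(index.items(), key=lambda item: cosine(query, item[1]), reverse=True)
    return [name for name, _ in ranked[:k]]


def coarse_quantize(vec, levels=7):
    """Round each value to a coarse grid (a generic stand-in, not TurboQuant)."""
    return [round(x * levels) / levels for x in vec]


# Hypothetical document embeddings; real ones have hundreds of dimensions.
DOCS = {
    "invoice policy": [0.9, 0.1, 0.0],
    "holiday schedule": [0.1, 0.9, 0.1],
    "expense report": [0.8, 0.2, 0.1],
}
QUERY = [1.0, 0.0, 0.0]

full_precision = search(QUERY, DOCS)
compressed = search(QUERY, {name: coarse_quantize(v) for name, v in DOCS.items()})

print(full_precision)
print(compressed)
```

In this small example the ranking survives the rounding, which is the property a good compression scheme has to preserve at scale: smaller vectors are cheaper to store and scan, but only useful if the nearest neighbors stay the same.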

Many gains

Compression is a go-to development for emerging technologies. Just as with AI, earlier technologies have relied on it to leapfrog their constraints. Despite valid criticism, the JPEG format has allowed images to be compressed enough to make them pervasive even in the early days of the internet. During World War II, voice transmissions were compressed 10x to give the Allies secure “SIGSALY” communication.

Across the sweep of history, we’ve thus been here before. In recent times, DeepSeek has even gotten in on the act twice. Following the release of R1 early last year, the team later announced it had cut training data volumes tremendously by storing large visual documents inside a small number of vision tokens with DeepSeek OCR. TurboQuant is the first publicly released equivalent from the U.S. side of the AI race. Together, they are set to enable enormous efficiency gains for LLMs in the long term.

Where limits still exist

Naturally, Google’s own Gemini models are bound to benefit from TurboQuant. Applied to online search and vectorized Google Drive data, it should give the LLMs faster knowledge gathering and shrink the storage footprint on Google Cloud servers.

Bottlenecks inherently shift when developments like these arrive. One puzzle that still has not been solved is how to achieve the same kind of compression factors for the LLM’s parameters. Small language models continue to perform well below their larger counterparts. In practice, quantization appears to significantly degrade a model’s performance. Perhaps Google’s researchers, or those over at DeepSeek, or somewhere else, will find a similar breakthrough there. If they finally do, the benefits from earlier compressions will compound once more. For now, gains are being made elsewhere.