With the new Trainium 2 and Graviton 4, AWS is revamping its chips, while an expanded collaboration with Nvidia will ensure customers have easier access to the most advanced AI hardware. AWS is making the announcements during its own Re:Invent conference in Las Vegas.

The name of the Trainium 2 gives away the purpose of the custom AWS chip: to train neural networks, some of them with trillions of parameters. The Trainium 2s are available via EC2, with up to 16 of them in a single instance. AWS says it offers a high degree of scalability by coupling these so-called “Trn2” instances into clusters of up to 100,000 chips. An LLM with 300 billion parameters can now be trained within weeks rather than months, according to the cloud provider.

Graviton for all kinds of workloads

Although 2023 is the year of AI, we shouldn’t forget about general-purpose compute. AWS hasn’t either. The new Graviton 4 promises to deliver a 30 percent improvement in raw compute performance across the board. AWS lists some prominent customers, such as Datadog, Discovery, Formula 1, SAP, Snowflake and Zendesk, that are deploying the Graviton chips for things like databases, analytics, web servers and microservices.

Nvidia should not be left out

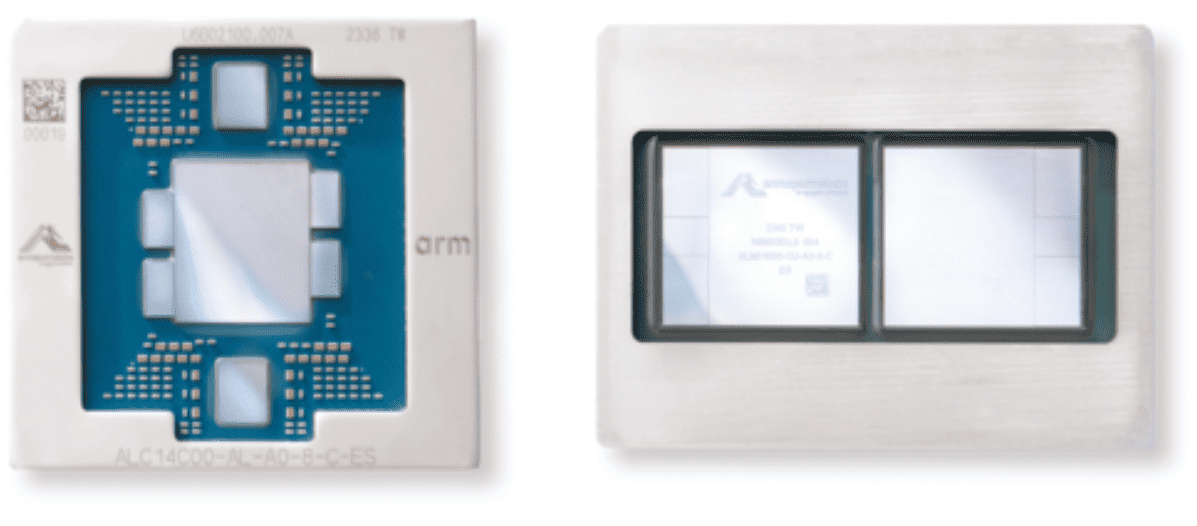

Just as no conference is complete without AI, rarely is an (extended) Nvidia partnership left out of the list of announcements. In this case, the partnership comes with some concrete promises for customers. For example, AWS will be the first cloud provider to offer Nvidia’s GH200 Grace Hopper “Superchip,” a combination of the Arm-based Grace CPU and the H100 GPU. The H100 is currently the most potent option for training and developing AI models, while the GH200 combines its performance with a CPU specifically suited for AI workloads.

Nvidia says it will itself use AWS as a “leading cloud provider for ML research and development,” with the implication that it is also the optimal option for other companies with deep pockets.
