IBM recently unveiled Vela, its own supercomputer designed specifically for training so-called “foundation” AI models such as GPT-3. According to IBM, Vela is intended to become the basis for all of its research and development work on this type of AI model.

Foundation models are large AI models trained at scale on large amounts of unlabeled data, for example via self-supervised learning. The resulting model can then be adapted relatively easily to a range of different tasks.

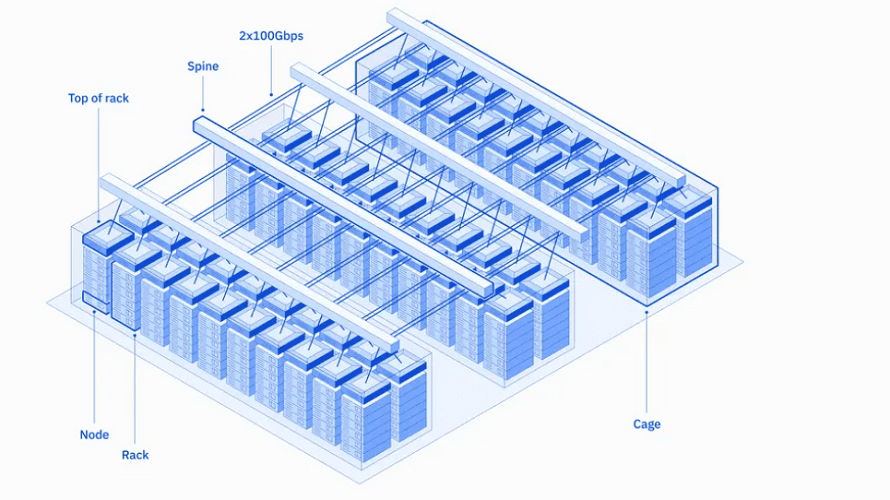

Although IBM has only now announced Vela, the supercomputer has been operating in various capacities since May 2022. It is built entirely in the cloud. IBM sees many advantages to a cloud-based supercomputer, chiefly in terms of productivity, even though the approach sacrifices some performance.

Underlying hardware

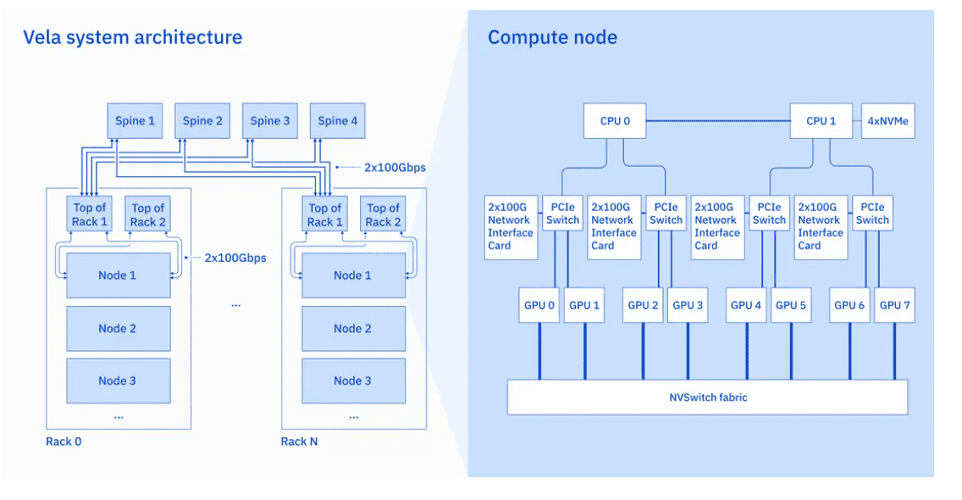

Even a cloud-based supercomputer ultimately runs on physical hardware. For its hardware base, Vela uses standard x86-based hardware, in contrast to the specialized and often expensive hardware typically used in HPC supercomputers.

In the Vela system, each node contains a pair of “regular” Intel Xeon Scalable processors and eight Nvidia A100 GPUs with 80GB of memory each. Each node is connected via several 100 Gbps Ethernet network interfaces, and it also has 1.5TB of DRAM and four 3.2TB NVMe drives for storage.
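The per-node figures above can be totted up with a small worked calculation. The sketch below simply multiplies the component counts reported in the article; the `cluster_totals` helper and its node count are hypothetical illustrations, since the article does not state how many nodes Vela has.

```python
"""Back-of-the-envelope capacity totals for one Vela node (figures from the article)."""

GPUS_PER_NODE = 8          # Nvidia A100 GPUs per node
GPU_MEMORY_GB = 80         # memory per A100, in GB
NVME_DRIVES_PER_NODE = 4   # NVMe drives per node
NVME_DRIVE_TB = 3.2        # capacity per NVMe drive, in TB
DRAM_TB = 1.5              # DRAM per node, in TB


def node_totals() -> dict:
    """Return the GPU count, aggregate GPU memory, NVMe storage and DRAM of one node."""
    return {
        "gpus": GPUS_PER_NODE,
        "gpu_memory_gb": GPUS_PER_NODE * GPU_MEMORY_GB,   # 8 x 80 GB
        "nvme_tb": NVME_DRIVES_PER_NODE * NVME_DRIVE_TB,  # 4 x 3.2 TB
        "dram_tb": DRAM_TB,
    }


def cluster_totals(num_nodes: int) -> dict:
    """Scale the per-node totals linearly to a hypothetical number of nodes.

    The node count is an illustrative input, not a published Vela figure.
    """
    if num_nodes < 1:
        raise ValueError("num_nodes must be at least 1")
    return {key: value * num_nodes for key, value in node_totals().items()}


if __name__ == "__main__":
    per_node = node_totals()
    print(f"GPU memory per node: {per_node['gpu_memory_gb']} GB")
    print(f"NVMe storage per node: {per_node['nvme_tb']:.1f} TB")
    print(f"DRAM per node: {per_node['dram_tb']} TB")
```

Per node, this works out to 640 GB of GPU memory and 12.8 TB of NVMe storage, alongside 1.5 TB of DRAM.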

Open-source software technology

On the software side, Vela runs a stack of open-source technologies that enable the training of foundation AI models. These include Kubernetes, in the form of Red Hat OpenShift; PyTorch for machine learning training; and Ray for scaling workloads.

IBM has also built a new workload-scheduling system for Vela, the MultiCluster App Dispatcher (MCAD). It is designed to handle cloud-based job scheduling for training foundation AI models.

Projects for Vela

IBM recently announced the first project for the new Vela supercomputer: in collaboration with NASA, the tech giant will develop foundation AI models for climate science. IBM is also working on a foundation AI model for the life sciences, MolFormer-XL. In the future, this model could, for example, help develop new molecules.

Vela may also be used for Project Wisdom, an internal project that aims, among other things, to add AI functionality to Red Hat Ansible in the form of a natural language interface. IBM might also use the supercomputer for cybersecurity projects.