Microsoft has experimented with various techniques for liquid cooling, but does not yet consider the technology mature enough to be widely used for server cooling in the data centres of its Azure cloud.

That is what Brandon Rubenstein, Director of Solutions Development and Mechanical/Thermal Engineering at Microsoft Azure, said during a presentation at the recent Open Compute Project Summit, as reported by Data Center Knowledge.

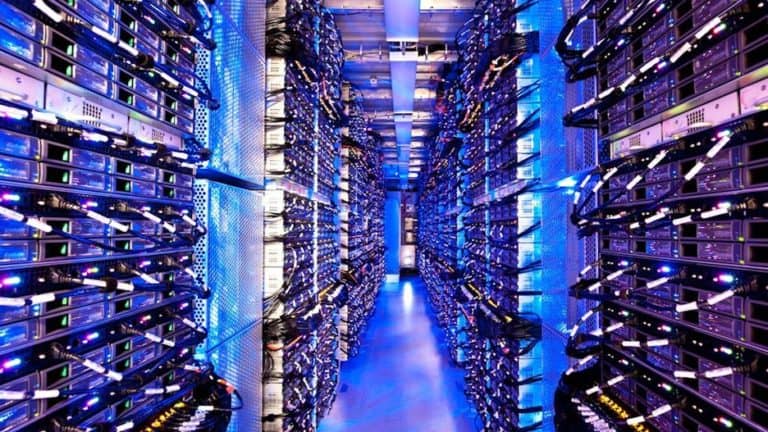

Liquid (or water) cooling is more efficient than air cooling, which can translate into huge cost savings at the scale of Microsoft’s global data centre operations for the Azure cloud. In addition, components are kept at a more constant temperature, and water cooling copes better with malfunctions in the cooling system. Humidity, dust and vibration are no longer a problem. Finally, heat can be reused or resold more easily, and water consumption is reduced.

Necessity

However, these are not the main reasons why Microsoft is looking at water cooling. More importantly, within a few years, the power density of server chips will simply be too high to be kept sufficiently cool with an air-based system.

Processors today typically require 200 watts, and that will only increase. For accelerators such as GPUs, which are used for machine learning and other specialised applications, the requirements are already between 250 and 400 watts. “Eventually we come to the point that some of these chips or CPU solutions will force us to use water cooling,” Rubenstein said. However, that time has not yet come for Microsoft.

Experiments

Microsoft experimented with various water cooling solutions for its Project Olympus OCP servers and shared the results during the last OCP Summit. These Olympus servers in themselves are extremely efficient. Thanks to a combination of fans, heat pipes and heat sinks, a 40 kW rack can still be cooled by air.

The first technique Rubenstein and his team tried was to mount microchannel cold plates directly on the dual 205-watt Skylake processors and the memory modules. This allowed two fans to be removed and the speed of the remaining fans to be reduced, so the system used 4 percent less energy per rack.

Microsoft also experimented with various immersion cooling techniques, in which servers are submerged in a dielectric fluid that dissipates the heat. With this approach, CPU temperatures could be reduced by 15 degrees compared to air cooling when the processors were running at 70 to 100 percent of their capacity.

Standards needed

These liquid-based cooling systems not only require additional investment but also make the servers harder to maintain. Moreover, Microsoft believes that standardised hardware is still lacking today.

“We need open specifications on DIMM and FPGA modules and dripless connectors and so on,” said Husam Alissa, senior engineer on Microsoft’s data centre team. “We will need certification of components such as motherboards and fiber optics and network equipment, rather than a new experiment every time we want to try something out. We need redundancy of resources for all these technologies and they need to be less proprietary and more commoditised.”

Microsoft is not yet ready to choose a particular technology, but realises that cooling could become a problem within two to three years. Rubenstein expects that liquid cooling at rack level will be sufficiently commoditised within one to two years. However, he believes that it will take at least another five to ten years for complete data centre solutions to mature. That gives Microsoft enough time to find out which solution is the most effective.
