AI assistance in writing code is not always beneficial. According to research, overall code quality appears to have dropped since the introduction of GitHub Copilot a year ago.

The GitClear study looked at the quality of code produced in the year since the AI tool GitHub Copilot was introduced. To do this, the researchers examined data from more than 150 million lines of changed code.

Two-thirds of this code comes from private parties and one-third from open-source projects supported by Google, Meta and Microsoft, among others.

The researchers looked specifically at code that was newly added, updated, copied or moved. So-called "noise", such as the same code added in multiple branches, blank lines and other meaningless lines of code, was excluded from the analysis.

AI assistant not always good

The findings show that AI programming assistance like GitHub Copilot does not always improve the quality of the code produced. The GitClear researchers find that the AI assistant only makes suggestions for adding code; it never suggests updating, moving or deleting code. Among other things, this results in a significant amount of redundant code.

In addition, the assistant reportedly tends to offer only suggestions that developers are likely to accept.

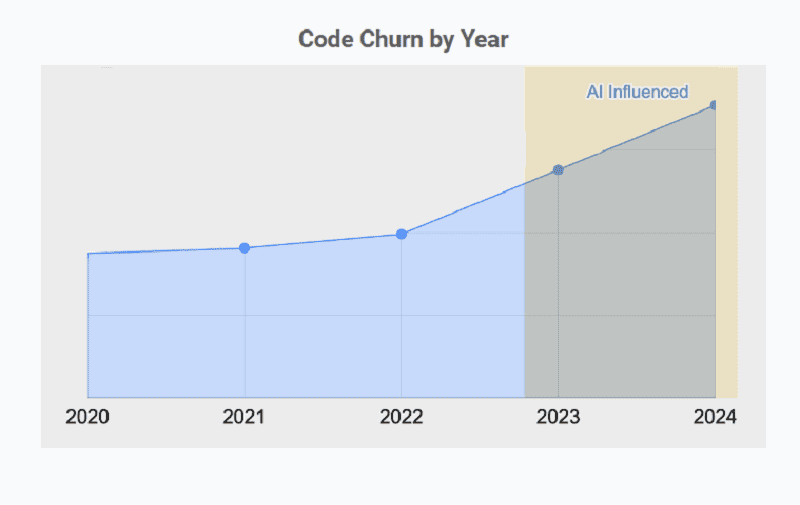

The researchers further note that, since the arrival of GitHub Copilot, code is primarily added, deleted, updated and copy/pasted, while the number of instances of code being moved has decreased. They also see a sharp increase in code churn, meaning code that is frequently modified after it is written. That is usually a bad sign for quality.
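To make the churn idea concrete, here is a minimal sketch of how such a figure could be computed. It assumes churn means a line that is changed or removed again within a fixed window after it was authored. The 14-day window, the `LineRecord` type and the `churn_rate` function are illustrative assumptions, not GitClear's actual tooling or exact definition.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import List, Optional


@dataclass
class LineRecord:
    """One authored line of code (hypothetical record format)."""
    authored: date
    modified: Optional[date] = None  # None means the line was never touched again


def churn_rate(records: List[LineRecord], window_days: int = 14) -> float:
    """Share of lines modified again within `window_days` of being authored.

    The 14-day default is an assumption made for this sketch.
    """
    if not records:
        return 0.0
    window = timedelta(days=window_days)
    churned = sum(
        1
        for r in records
        if r.modified is not None
        and timedelta(0) <= r.modified - r.authored <= window
    )
    return churned / len(records)


# Made-up sample data, purely for illustration.
sample = [
    LineRecord(date(2023, 3, 1), date(2023, 3, 4)),   # changed after 3 days: churn
    LineRecord(date(2023, 3, 1), date(2023, 3, 12)),  # changed after 11 days: churn
    LineRecord(date(2023, 3, 1), date(2023, 4, 20)),  # changed after 50 days: not churn
    LineRecord(date(2023, 3, 1)),                     # never changed: not churn
]
print(f"churn rate: {churn_rate(sample):.0%}")
```

A rising value for a metric like this over time would correspond to the trend the researchers describe: more code being rewritten soon after it lands.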

Reasons

The researchers attribute these trends mostly to the use of AI assistants for code. How companies can solve these problems is still the subject of further research.

However, the researchers do urge developers, and especially development team leaders, to keep a close eye on incoming data and consider its future implications for their projects.
