Adroitly defining itself as ‘the data trust company’, Ataccama has detailed the release of its new Agentic Data Observability technology in Ataccama ONE, its end-to-end agentic data trust platform for regulated enterprises. As agentic AI services continue to come to the fore, data observability helps make sure agentic AI receives high-quality, reliable data, which is an essential step in preventing autonomous errors and AI bias. So what’s inside the engineering box now on offer?

Ataccama ONE is described as a unified, AI-powered platform built to automate data quality, governance and master data management (MDM) through continuous, real-time pipeline monitoring to ensure organisations can operate with trustworthy enterprise data.

Who needs regulated data?

The company noted that its technology suits the most regulated enterprises, so that would be firms operating in healthcare, financial services, pharmaceuticals and life sciences, energy and utilities, aerospace and defence, telecommunications, agriculture and food, transportation and logistics, plus (and you may not have guessed this one) casinos, gambling and gaming.

The release from Ataccama is intended to move its data quality capabilities into pipelines and incident workflows, giving teams a direct path from detection to triage and remediation by unifying observability with automated data quality and governed business context.

It’s about time for accountable ownership

Now claiming to ‘close the gaps left by standalone data observability’, the organisation says it helps ensure the integrity of data powering AI agents and critical business decisions. While Ataccama doesn’t define standalone data observability as such, we can infer this to mean data observability that languishes in the less useful (in this context) independent monitoring space, capable of detecting (and providing alerts for) data quality issues related to information pipelines, but not necessarily working inside them.

Unlike standalone data observability tools that stop at alerts, Ataccama links issues to business impact, accountable ownership and tracked remediation in a single workflow.

Data is on a journey

What’s happening here – as enterprises push AI into production – is a state where we can say that data is on a journey through pipelines, transformations and distributed systems… and we need to enact data trust on every stage of that journey.

Data quality issues introduced upstream can spread across the data estate and surface in LLMs, dashboards, risk models and compliance reporting only after flawed data has already impacted the business. Ataccama says that while many organisations can detect anomalies, detection alone fails to answer the questions that matter in triage and remediation, especially in regulated environments.

What we need to know now in the world of data trust for AI is what is impacted, who owns the fix, whether downstream assets remain safe to use and how to prove resolution later for audit and regulatory scrutiny.

“As data ecosystems expand and AI increases the cost of poor information, observability has become critical to understanding what happens across the pipeline,” said Susan Spence, senior data product analyst at SSEN Transmission, an organisation that oversees the high-voltage electricity grid in the north of Scotland. “Yet trust does not come from visibility alone. It depends on data quality as the foundation, enabling organisations to identify issues early, address them before they escalate and protect the decisions that rely on accurate data. Ataccama brings these capabilities together so enterprises can reduce downstream disruption and deliver trust at scale.”

Agentic Data Observability

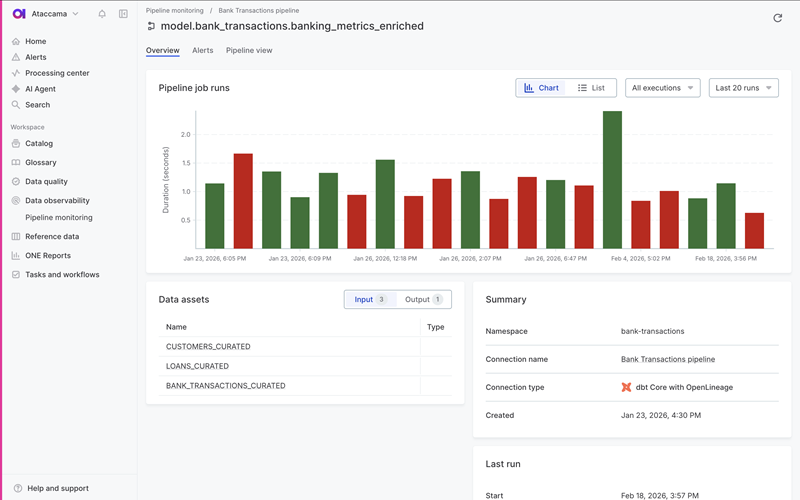

Ataccama introduces Agentic Data Observability by embedding pipeline monitoring directly into the Ataccama ONE end-to-end agentic data trust platform, unified with data quality, lineage, cataloguing and governance.

The capability is powered by the ONE AI Agent, the digital data steward, which reduces the manual effort that typically slows investigations by surfacing issues with governed context, auto-generating data quality rules and suggesting where to apply them. When a pipeline breaks, teams immediately understand what is affected, who is responsible and how to restore data trust before flawed data reaches reporting, risk, or AI systems. In turn, trust between business and data teams remains intact.

Through the Ataccama MCP Server, enterprises extend this trusted foundation to AI tools such as Claude and Microsoft Copilot, enabling them to access governed, validated data from Ataccama ONE.

“Observability has historically stopped at detection, which leaves teams with alerts but no path to resolution,” said Jay Limburn, chief product officer at Ataccama. “That gap forces organisations to spend hours chasing root cause just to determine what broke, what it impacts and whether downstream reporting, risk decisions, or production AI can still be trusted. Detection-only observability isn’t enough when data changes constantly and the cost is compliance exposure, broken reporting, or AI you can’t defend. With Agentic Data Observability, we are bringing monitoring into the trust layer by linking issues to business impact, ownership and remediation workflows. That is how enterprises prove trust in motion and scale AI with confidence.”

Pipeline monitoring

The launch introduces pipeline monitoring and data quality in motion. In other words, Ataccama monitors pipelines across popular orchestrators such as dbt, Airflow, Dagster, Azure Data Factory and AWS Glue to detect failures and anomalies during transformation, not after downstream systems are already impacted.

Data engineering teams can reuse existing Ataccama data quality rules across pipelines and persisted datasets, reducing duplicate effort and enforcing consistent standards across the data estate.
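To make the idea of rule reuse concrete, here is a minimal Python sketch of one rule definition applied to both an in-flight pipeline batch and a persisted dataset. It does not use Ataccama's API; the `Rule` class, the `failures` helper and the sample rows are all hypothetical and exist only to illustrate the principle.

```python
from dataclasses import dataclass
from typing import Callable, Iterable

Row = dict[str, object]


@dataclass(frozen=True)
class Rule:
    """A named, reusable data quality check (hypothetical structure)."""
    name: str
    check: Callable[[Row], bool]


def failures(rule: Rule, rows: Iterable[Row]) -> list[Row]:
    """Return the rows that fail the rule, wherever the rows came from."""
    return [row for row in rows if not rule.check(row)]


# One rule definition (illustrative): country must be a two-letter string.
country_rule = Rule(
    name="country_code_is_two_letters",
    check=lambda row: isinstance(row.get("country"), str)
    and len(row["country"]) == 2,
)

# Hypothetical sample data: a batch mid-pipeline and a table at rest.
in_flight_batch = [{"id": 1, "country": "GB"}, {"id": 2, "country": "GBR"}]
persisted_table = [{"id": 3, "country": "DE"}, {"id": 4, "country": None}]

# The same rule object is evaluated in both places.
pipeline_failures = failures(country_rule, in_flight_batch)
table_failures = failures(country_rule, persisted_table)
```

Because the rule is defined once and passed around as a value, the standard it enforces cannot drift between the pipeline check and the check on stored data.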

Unified alerting here prioritises incidents using governed business context. Data observability signals and data quality rule failures surface in a single alert, with notifications routed through email, Slack, or Microsoft Teams. Governance context, such as critical data elements (CDEs), stewardship groups and business terms, prioritises alerts so teams focus on issues that create real operational or regulatory exposure.
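As a rough illustration of how governance context might rank alerts, the Python sketch below scores each alert by whether it touches a CDE, whether a stewardship group is assigned and how many business terms are linked. The `Alert` fields, the weights and the asset names are assumptions made for this example, not Ataccama's actual scoring model.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Alert:
    """Hypothetical unified alert carrying governance context."""
    asset: str
    source: str          # "observability" or "dq_rule"
    is_cde: bool         # touches a critical data element
    has_steward: bool    # a stewardship group is assigned
    business_terms: int  # number of linked business terms


def priority(alert: Alert) -> int:
    """Score an alert; the weights are illustrative assumptions."""
    score = 0
    if alert.is_cde:
        score += 10
    if alert.has_steward:
        score += 3
    # Cap the contribution so heavily tagged assets do not dominate.
    score += min(alert.business_terms, 5)
    return score


def prioritise(alerts: list[Alert]) -> list[Alert]:
    """Return alerts ordered from highest to lowest priority."""
    return sorted(alerts, key=priority, reverse=True)


alerts = [
    Alert("staging.tmp_events", "observability", False, False, 0),
    Alert("finance.customer_risk", "dq_rule", True, True, 2),
    Alert("marketing.campaign", "observability", False, True, 1),
]

ranked = [alert.asset for alert in prioritise(alerts)]
```

Note that observability signals and rule failures share one `Alert` type here, which is what allows them to be ranked against each other in a single queue.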

Lineage-driven blast radius impact

Lineage-driven impact analysis is here to connect technical failures to business assets. Integrated lineage links issues not only to datasets and pipelines, but also to downstream governed reports and data products. This makes blast radius visible early, enabling teams to triage incidents based on real business impact and prevent flawed data from reaching high-stakes decisions.
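The notion of blast radius can be sketched as a graph traversal: starting from the failed asset, walk the lineage graph downstream and collect everything reachable. The Python below is a minimal breadth-first version; the `LINEAGE` map and its asset names are invented for illustration and say nothing about how Ataccama stores lineage.

```python
from collections import deque

# Hypothetical lineage: each asset maps to its direct downstream consumers.
LINEAGE = {
    "raw.orders": ["staging.orders"],
    "staging.orders": ["mart.revenue", "mart.customer_ltv"],
    "mart.revenue": ["report.quarterly_revenue"],
    "mart.customer_ltv": ["model.churn_risk"],
}


def blast_radius(lineage: dict[str, list[str]], failed_asset: str) -> list[str]:
    """Return every downstream asset reachable from the failed one,
    nearest first (breadth-first order)."""
    seen = {failed_asset}
    queue = deque([failed_asset])
    impacted = []
    while queue:
        current = queue.popleft()
        for child in lineage.get(current, []):
            if child not in seen:  # guards against cycles and duplicates
                seen.add(child)
                impacted.append(child)
                queue.append(child)
    return impacted


impacted = blast_radius(LINEAGE, "staging.orders")
```

In this toy graph a failure in `staging.orders` reaches a governed report and a risk model two hops away, which is exactly the kind of downstream exposure that triage needs to see early.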

Finally (for now), Ataccama says it has incorporated resolution tracking with workflow integration and an audit-ready history.

Straightforward issue management supports a repeatable workflow from detection through investigation and closure. Users can route work to tools teams already use and track ownership directly in Jira, with ServiceNow integration coming soon. This creates an auditable record of what happened, who was responsible for the response, the actions taken and when trust was restored.
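An audit-ready history of this kind amounts to an append-only log of who did what and when. The following Python sketch shows one way such a record could be modelled; the `Incident` class, its status names and the sample actors are hypothetical and are not drawn from Ataccama, Jira or ServiceNow.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class Incident:
    """Hypothetical incident with an append-only audit history."""
    asset: str
    owner: str
    status: str = "detected"
    history: list[tuple[datetime, str, str, str]] = field(default_factory=list)

    def record(self, actor: str, status: str, note: str) -> None:
        """Move to a new status and log who did it, when and why."""
        self.status = status
        self.history.append((datetime.now(timezone.utc), actor, status, note))


incident = Incident(asset="mart.revenue", owner="finance-data-stewards")
incident.record("monitor", "detected", "Row count dropped during transformation")
incident.record("a.engineer", "investigating", "Traced to upstream schema change")
incident.record("a.engineer", "resolved", "Mapping fixed and data reloaded")

# The sequence of statuses, in the order they were recorded.
statuses = [entry[2] for entry in incident.history]
```

Each entry carries a UTC timestamp, so the record answers the audit questions in the text: what happened, who responded, what they did and when trust was restored.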

Market overview analysis

Ataccama is certainly among the more vocal of enterprise technology vendors operating in the data trust space. Others who would like to claim this crown include Informatica; Collibra (strong on governance); Alation (known for data cataloging); IBM (when isn’t IBM there? … this time with Cloud Pak for Data); Atlan (a modern, high-growth competitor focused on a “Data Control Plane” for collaborative teams using the modern data stack); Monte Carlo (the leader in data observability, focused specifically on “data downtime” and pipeline health); SAP Master Data Governance; Precisely (more obviously known for data integrity) and OvalEdge.

That’s not a magic quadrant or definitive summary, that’s merely a collection of related technologies that goes some way to illustrate how vibrant the data quality market is, especially in the age of agentic AI where we need to know a whole lot about data provenance, data robustness and the ongoing state and health of data pipelines as they now feed AI services.

If nothing else, Ataccama arguably goes further than any of the abovementioned firms in terms of its ability to explain why data trust matters and provide us with a substantive explanation of its toolsets and capabilities, so that may make its approach to data trust rather more, well, trustworthy.