Meta has released Code Llama, a family of AI models built to assist with programming. It is based on the wildly popular open-source model Llama 2. Meta boasts some impressive benchmark results, but is Code Llama the best coding assistant?

In the accompanying research paper, Meta researchers describe the rapid evolution that LLMs (large language models) have undergone. A chatbot like ChatGPT can provide stunningly credible answers to all sorts of questions. What is missing there, however, is accuracy: despite a huge number of parameters (reportedly over one and a half trillion), an LLM can still make many mistakes. A more compact, theoretically less sophisticated AI model can be significantly more useful for specific tasks when it is trained on high-quality data sets.

Meta’s open-source Llama 2 model is currently very popular among developers. Other companies repeatedly cite it as a foundation for a variety of AI purposes. For example, organizations can use Llama 2 through IBM and VMware to train their own models on proprietary company data.

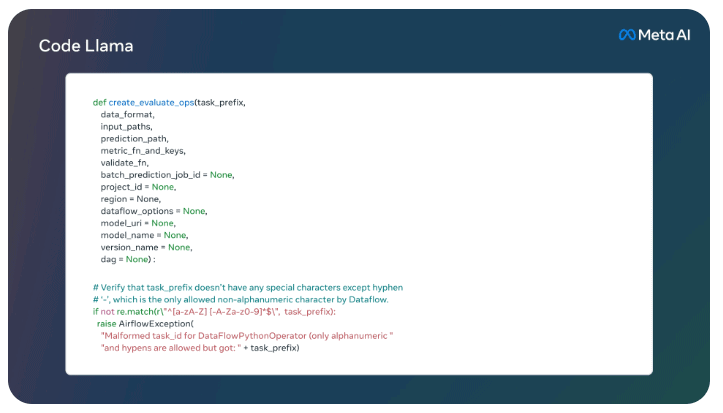

Code Llama itself is a further development of the Llama 2 model, trained specifically on programming code and its documentation. Depending on the variant, it can complete, generate or explain code based on user input. It can be used under the same terms as Llama 2, which means it is available for commercial purposes as well.

Three versions, three formats

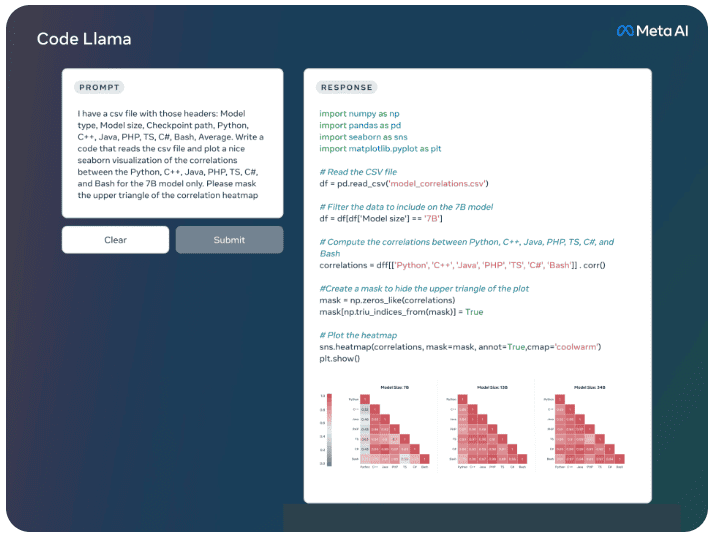

Code Llama comes in three versions. The foundation model is offered in three sizes, with 7, 13 or 34 billion parameters. This allows users to choose the size they can and want to run, because the larger a model, the higher its hardware requirements. On the other hand, the smaller models have a latency advantage, which makes them better suited to time-sensitive tasks.

There is also Code Llama – Python, which specializes in the Python language. Meta explains that Python is the most commonly used language in code generation benchmarks, and a specialized model delivers the best results there. Code Llama – Instruct is designed to generate code from natural-language instructions and to accompany it with natural-language explanations. It is said to produce code that is more secure than what ChatGPT provides. This is just as well, because that chatbot’s programming results were worrisome.
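To give a feel for why model size drives hardware requirements, here is a back-of-the-envelope sketch in Python. It assumes weights are stored at 16-bit precision (2 bytes per parameter), which is a common choice but not something Meta states here, and it counts only the weights. Real memory use is higher once activations and the context are included. The function name `weight_memory_gb` is made up for this illustration.

```python
# Rough estimate of the memory needed just to hold a model's weights.
# Assumption (not from Meta): 2 bytes per parameter, i.e. 16-bit weights.

PARAMS_BILLIONS = [7, 13, 34]  # the three model sizes named in the article


def weight_memory_gb(params_billions: float, bytes_per_param: float = 2.0) -> float:
    """Approximate weight memory in gigabytes (1 GB = 10^9 bytes)."""
    # (params_billions * 1e9 parameters) * bytes_per_param / 1e9 bytes per GB
    return params_billions * bytes_per_param


for size in PARAMS_BILLIONS:
    print(f"{size}B parameters: roughly {weight_memory_gb(size):.0f} GB of weights")
```

Under these assumptions, the smallest model fits on a single high-end consumer GPU, while the largest needs considerably more memory or a lower-precision format.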

Incidentally, Meta does not recommend using Code Llama for anything other than programming. The dataset it was trained on makes it poor at handling other tasks. That specialization does help the Code Llama variants score impressive results in benchmarks. Part of the credit goes to the LLM’s large context window of up to 100,000 tokens. A token is a small chunk of text, typically a word or a fragment of one, that the model uses as its unit of input. The more tokens fit in the context window, the more of the surrounding code the model can take into account and (in theory) the more focused its answers.

HumanEval, MBPP (Mostly Basic Python Programming) and Multilingual HumanEval are popular benchmarks that other LLMs have also been tested against. On HumanEval, even the largest Code Llama – Python version loses out to GPT-4: where OpenAI’s model is 67 percent accurate, Meta’s best LLM gets 53.7 percent. However, a Code Llama model does score highest in the other two benchmarks.
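Benchmarks of this kind score a model on functional correctness: the generated code is run against unit tests, and the reported percentage is the share of problems whose tests pass. The Python sketch below illustrates that idea only. The two problems, the helper names `passes` and `pass_rate`, and the use of a bare `exec` are all assumptions for illustration. Real benchmark harnesses run generated code in a sandbox.

```python
# Minimal illustration of test-based scoring for generated code.
# The problems below are made up; they are not taken from any benchmark.


def passes(candidate_src: str, test_src: str) -> bool:
    """Return True if the candidate code runs and its tests raise no error."""
    namespace: dict = {}
    try:
        exec(candidate_src, namespace)  # define the generated function
        exec(test_src, namespace)       # run the assertions against it
        return True
    except Exception:
        return False


# Each entry: (hypothetical model output, tests for that problem)
problems = [
    ("def add(a, b):\n    return a + b", "assert add(2, 3) == 5"),
    ("def is_even(n):\n    return n % 2 == 1", "assert is_even(4)"),  # buggy output
]


def pass_rate(items) -> float:
    """Fraction of problems whose generated solution passes its tests."""
    return sum(passes(code, tests) for code, tests in items) / len(items)


print(f"Share of problems solved: {pass_rate(problems):.1%}")
```

Never run untrusted model output with `exec` outside an isolated environment. The sketch does so only to keep the example short.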

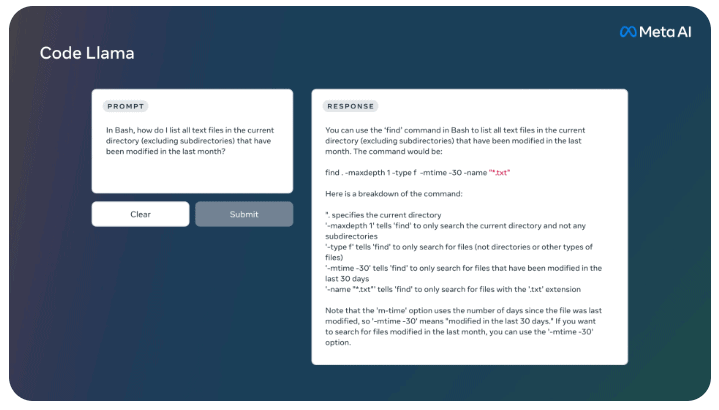

Uses: debugging, education

As for practical uses, Meta states that Code Llama is suitable for debugging, completing and generating programming code, and teaching. It can help programmers with suggestions and corrections, and explain them in plain language. The company has also published a Responsible Use Guide for developers who want to further develop the LLM for more specific applications.
