Ransomware can strike fast and hard, so it is important to be well prepared. A Dutch multinational experienced this first-hand when it fell victim to the Conti ransomware. We spoke with the company’s security spokesperson. Take advantage of the lessons he learned.

We hear and read almost daily about the dangers of ransomware. Those reports, however, almost always come from security solution providers; an open conversation with a victim of such an attack is very rare. This is not surprising, of course. Organizations do not like to talk to the press about their cybersecurity. The general perception is that doing so would only make them more vulnerable, since the attackers can read along too.

It’s not clear whether talking about your cybersecurity strategy actually makes an organization more vulnerable. We do understand, however, that it’s not exactly high on organizations’ list of priorities. Cybersecurity doesn’t earn you the kind of media attention that, for example, anything related to the rather vague concept of “digital transformation” does. In addition, many organizations make major cybersecurity decisions only after something serious has occurred. That’s not exactly the message you want to get out into the world about your organization either.

However, we believe that sharing cybersecurity stories with a neutral third party like us can improve the cybersecurity of the market as a whole. So we jumped at the opportunity when SentinelOne found a customer willing to talk with us at length about how it fell victim to a ransomware attack in late 2021. The only condition was that we leave out the names of both the company and the spokesperson. That wasn’t a problem, as it doesn’t diminish the impact of the story. Its key takeaways are the same whether or not we know who it’s about.

Multinational company with a diverse landscape

The company that is the subject of this article is a multinational corporation consisting of 15 companies. The spokesperson we spoke with is responsible for security. The multinational has a total of about 2,000 endpoints and several hundred servers under management, which gives a good indication of its size.

When it comes to cybersecurity within the multinational, the spokesperson is dealing with a fairly diverse landscape. That is, not only are the companies located in different countries, but not all have the same infrastructure. The level of security also varies between enterprises. While the company is constantly working to raise the level, some of the companies have more ground to make up and take longer to get to the desired level. The intention for the multinational is to deploy new solutions and tooling as widely as possible to all companies, but sometimes that is difficult or impossible from a technical standpoint.

Day 1: Weakest link is the target, but awareness is not immediately there

As a result, not every enterprise within the multinational was up to date, as the spokesperson puts it. This included the company that became the target of the ransomware attack. It had no MFA, did not do awareness training and, as is almost always the case, had some vulnerable servers. It did have a good backup in place, though, which would prove very important later.

The first sign that something was wrong came on a Friday night, when a monitoring tool reported that servers were unreachable. At that point, however, no alarm bells went off. In the preceding period there had been a number of power outages related to maintenance on the power grid in that region, and those outages were initially seen as the culprit. “We would solve this problem the next day,” is how the spokesperson describes the state of mind at the time.

This reaction may not sound very smart, but it is a natural one, especially given the recent power outages. This kind of thing happens to an IT department on a regular basis. Without the right tools (which this company did not have at the time), you simply don’t notice that something is wrong. You could of course try to deal with this by treating every incident as a security incident from the outset, but that produces many false positives, which is not a very efficient way of working.

Day 2: Extent becomes clear, quick response required

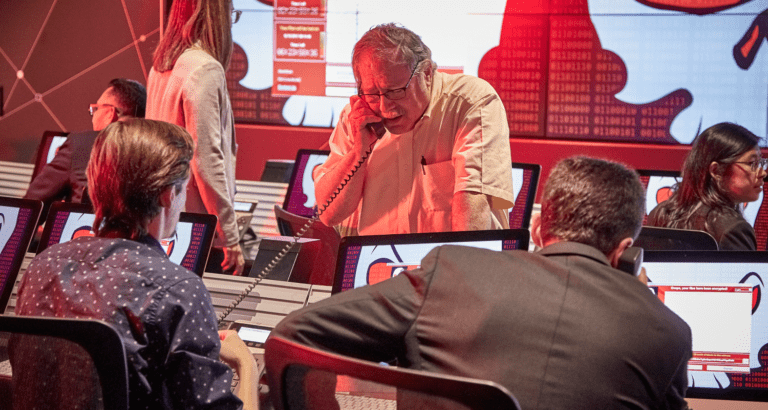

On Friday evening it was not immediately clear that things were not right, but on the second day (Saturday) it was. The spokesperson received a report that day that things were wrong at that one company. All servers had been encrypted by ransomware, which also rendered all business-critical applications unusable. In other words, everything ground to a halt. The situation only got worse in the first few hours after that. One system after another proved inaccessible. It became increasingly clear that the company as a whole had been hit hard.

The moment it is clear that it is a ransomware attack, the first reaction is very important, the spokesperson says. This was the company’s first ransomware attack, so no one had experience with one. Nevertheless, they reacted quickly and forcefully, immediately blocking all traffic at the firewall. In addition, the company set up a crisis team. It is very important to do this as soon as possible, according to the spokesperson. You should also know in advance who belongs on that crisis team; in other words, decide this at a time when there is no crisis. The profiles of the people on such a team, especially their experience in making (tough) decisions, are also crucial, he says. We will come back to this later in this article.

Calling for help, sometimes in vain

After organizing the crisis team, actual steps must be taken to deal with the ransomware. The affected company, however, had a problem. It didn’t use managed services from security vendors yet. That is really important to set up, according to the spokesperson. Most organizations are unable to hire the specialists needed for this themselves.

The multinational was already in talks with a number of endpoint security vendors, including its current supplier, about purchasing managed services. It soon became clear that the current endpoint security vendor had no capacity to help. Formally, there was no signed agreement yet, so that vendor was within its rights to act this way.

The crisis team then decided to contact SentinelOne, one of the other endpoint security vendors it was already in discussions with. The other vendor in those discussions was CrowdStrike, the other usual suspect in the field of advanced EDR/XDR. The choice of SentinelOne was partly financially driven, but the company had also already done a POC with it. In addition, the multinational tends to choose best-of-breed solutions rather than best-of-suite, and according to the spokesperson, SentinelOne fits that approach better than CrowdStrike does.

Less than an hour and a half after being contacted, SentinelOne had assembled a Vigilance team (a Digital Forensics and Incident Response team) specifically for the affected company. The company did not have to sign off on all sorts of things first; SentinelOne understood very well that dealing with the crisis came first. SentinelOne’s Vigilance team was clearly very experienced in fighting this type of attack and got started right away. Crucially, according to the spokesperson, the collaboration was perfect, and it was actually a pleasant experience in the middle of a crisis. “SentinelOne’s Vigilance team asked the right questions, made the right suggestions, which ultimately gave us a kind of lifeguard feeling. Every step was actually a step forward,” the spokesperson summarized. The availability of a good (immutable) backup simplified this process.

Day 3: The rebuild

Meanwhile, together with SentinelOne, the company had drawn up a roadmap to get back up and running bit by bit. This was quite an undertaking, as basically everything had to be rebuilt. All the servers ran (and still run) as VMware virtual machines, and the VMware hosts themselves were also down, so VMware itself was essentially gone as well. All the hosts had to be rebuilt from the ground up.

The rebuild began with the servers that are prerequisites for the rest of the affected company’s environment: first the VMware hosts, then the domain controllers (which run as VMs). Once a VMware host was back up, its VMs could be restored from backup.
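The restore order described above is essentially a dependency ordering: a VM cannot come back before the VMware host it runs on, and most other servers need the domain controllers. As a minimal sketch, the snippet below derives restore “waves” from a dependency map using Python’s standard-library `graphlib` (Python 3.9+). The server names and dependencies are hypothetical and do not describe the company’s actual environment.

```python
from graphlib import TopologicalSorter

# Hypothetical dependency map: each system lists the systems that must be
# restored before it. Names are illustrative, not the company's inventory.
DEPENDENCIES = {
    "vmware-host-01": set(),
    "vmware-host-02": set(),
    "dc-01": {"vmware-host-01"},
    "dc-02": {"vmware-host-02"},
    "file-server": {"dc-01", "vmware-host-02"},
    "mail-server": {"dc-01", "dc-02"},
    "order-system": {"dc-01", "file-server"},
}


def restore_waves(deps):
    """Group systems into waves; each wave only depends on earlier waves."""
    sorter = TopologicalSorter(deps)
    sorter.prepare()  # also raises CycleError if the dependencies loop
    waves = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())  # everything whose prerequisites are done
        waves.append(ready)
        sorter.done(*ready)  # mark this wave as restored
    return waves


if __name__ == "__main__":
    for number, wave in enumerate(restore_waves(DEPENDENCIES), start=1):
        print(f"Wave {number}: {', '.join(wave)}")
```

Systems within one wave do not depend on each other, so in principle they can be restored in parallel; the next wave only starts once everything it relies on is back.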

Next, the SentinelOne agent was installed on each restored server, running in a mode that allowed it to communicate only with the SentinelOne cloud. SentinelOne could then check remotely whether the server was healthy. This process found traces of ransomware, especially on the first servers revived this way. To prevent this from causing further problems, the Vigilance team immediately blocked the ports and hosts on the firewall that the ransomware used to communicate with the outside world. Once a server was finally given the green light, SentinelOne included it in its regular 24/7 managed service.

SentinelOne didn’t just take a reactive approach, by the way. It also proactively asked the company to share as much information as possible, in order to determine what type of ransomware it was dealing with. It analyzed the log files of the company’s firewalls and from those files fairly quickly identified the so-called beacons used in the attack. SentinelOne also wanted an image of, or at least access to, one of the company’s encrypted servers. Obviously, that was not the focus of attention on day two, but the company was able to provide it by the end of day three, on Sunday evening. Based on all this information, SentinelOne determined that it was the Conti ransomware.
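A beacon is a compromised machine periodically “calling home” to the attacker’s command-and-control server, which tends to show up in firewall logs as connections to the same destination at suspiciously regular intervals. The article does not say how SentinelOne analyzed the logs; the sketch below is only a common, simplified heuristic, assuming a hypothetical log format of (timestamp, source, destination, port) tuples and illustrative thresholds. It flags flows whose intervals between connections barely vary.

```python
from collections import defaultdict
from statistics import mean, pstdev

# Hypothetical, simplified firewall log: (timestamp in seconds, source IP,
# destination IP, destination port). Addresses use documentation ranges.
LOG = (
    [(t, "10.0.0.15", "203.0.113.50", 443) for t in (0, 60, 121, 180, 241, 300, 359)]
    + [(t, "10.0.0.22", "198.51.100.7", 443) for t in (5, 12, 200, 210, 900, 1500)]
    + [(t, "10.0.0.30", "192.0.2.10", 53) for t in (10, 70)]
)


def find_beacons(records, min_connections=5, max_jitter=0.1):
    """Flag (src, dst, port) flows whose connection intervals are nearly constant.

    max_jitter is the allowed coefficient of variation (stdev / mean) of the
    gaps between connections. Thresholds are illustrative, not tuned values.
    """
    flows = defaultdict(list)
    for ts, src, dst, port in records:
        flows[(src, dst, port)].append(ts)

    suspects = []
    for flow, times in flows.items():
        if len(times) < min_connections:
            continue  # too few connections to judge regularity
        times.sort()
        gaps = [later - earlier for earlier, later in zip(times, times[1:])]
        avg = mean(gaps)
        if avg > 0 and pstdev(gaps) / avg <= max_jitter:
            suspects.append((flow, round(avg)))  # flow and its approximate period
    return suspects


if __name__ == "__main__":
    for (src, dst, port), interval in find_beacons(LOG):
        print(f"Possible beacon: {src} -> {dst}:{port} every ~{interval}s")
```

Real command-and-control traffic often adds random jitter to evade exactly this kind of check, so in practice this would be one signal among many. Destinations flagged this way are candidates for blocking at the firewall.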

Day 4 and beyond: rebooting step by step

By Sunday evening, when the company sent the image to SentinelOne, it was already pretty far along with the reboot. Domain, mail and file servers, for example, were already back up and running. The spokesperson therefore sees Sunday night/Monday morning as the turning point. That’s when the first critical applications came back online, followed one by one by the other important applications.

Mind you, the company was still not operational at that time; for that, it had to wait until Tuesday, when the systems it uses to take orders came back online. Order taking is at the core of the multinational’s operations and therefore of this specific company. One advantage for this particular company is that it doesn’t do much business on weekends, so the ransomware attack only really disrupted its business for 1.5 days. That’s fortunate. Fully recovering from the attack did take a couple of extra weeks, by the way: that’s how long it took to get all the servers back up and running securely.

It is important for almost every organization to recover from a ransomware attack as quickly as possible. For the company we discuss in this article, it was really about survival, the spokesperson says. In the segment it operates in, you can be down for a week at most; after that, it becomes very difficult to maintain market share. That is also why the decision-makers within the multinational kept the lines of communication with the Conti group open. In other words, paying the ransom was always an option on the table. It is easy to say that you should not pay, but when the survival of your company depends on that decision, it isn’t as easy to follow through.

Important lessons learned

All in all, the multinational was operational again after a few days. The attack was repelled and the survival of the company was assured. After such an attack, however, it is also important to understand the lessons that we can learn from it. These lessons, which are also relevant for other organizations, are listed in the remainder of this article. You could think of this as a comprehensive conclusion.

Lesson 1: Get and keep the right mindset

When we ask the multinational spokesperson at the end of the interview what he and the organization as a whole learned from it, the first thing that comes up is the term “mindset”. It has fundamentally changed throughout the organization. Cybersecurity initiatives have now gained momentum.

Now the key is to maintain this state of readiness, which is already starting to ebb away, the spokesperson notes. That is why security awareness training has been firmly put in place. Not everyone is keen on it, but the events of late 2021 have been a lesson for the company, so everyone will have to take it, one way or another.

Lesson 2: Set up an experienced crisis team

A second important lesson is that the composition of the crisis team is critical. Had they not had such an experienced team, things would have ended differently, the spokesperson states. Mind you, SentinelOne’s Vigilance team was a crucial part of this crisis team. “Without SentinelOne, we would have run into a second attack in no time,” the spokesperson states. With the team they had, every step was a step forward, and everyone constantly felt that things were moving in the right direction.

The most important qualities that at least several members of such a team must have are experience managing crises and the ability to work well with specialists from an external team, in this case the SentinelOne Vigilance team. That means practical experience, not just training. Training is of course always better than no training, but practical experience is still fundamentally different.

Lesson 3: Basic hygiene helps a lot to prevent attacks

SentinelOne is one of the most advanced cybersecurity players in the world today, and it certainly did a great deal to help the company under attack. Still, good security starts somewhere else: with the basics. That means implementing MFA everywhere, updating and patching servers and systems, and providing security awareness training. The multinational now also follows up with people who don’t participate; skipping security awareness training isn’t an option anymore. In addition, backups across the organization need to be ransomware-proof. Finally, it is good to focus strongly on SSO and password management.

In short, it is not rocket science, to use the spokesperson’s words. Most organizations already run Active Directory or Azure Active Directory, for example, so take advantage of their MFA capabilities, he suggests.

Lesson 4: SentinelOne is truly next-gen

A final lesson that the spokesperson and the multinational as a whole have drawn from the ransomware attack is that SentinelOne is not just next-gen on paper. It really is next-gen in practice too, the spokesperson says. Initially, of course, they didn’t have much choice, but SentinelOne has since been rolled out at all companies within the multinational. That rollout took just a few weeks and went flawlessly. Nor, for example, does the SentinelOne agent have any negative impact on the functioning of applications.

On top of that, thanks to SentinelOne’s SaaS environment, management is child’s play. Not only does the spokesperson no longer need an on-prem server, he could now essentially manage the entire group of enterprises himself. That’s how easy the SentinelOne platform is to use in practice. Asked about negative points of SentinelOne, the spokesperson simply can’t think of any. He even goes as far as to say that, despite the affected company’s dated environment, they probably would have repelled the attack had SentinelOne already been in place.

SentinelOne did not only help the multinational company recover from the attack. It also dramatically reduced the attack surface for a subsequent attack. The multinational also started to use SentinelOne Ranger very quickly after the attack. That tool scans everything that happens on the network and then reports which unprotected endpoints it sees. They used this tool regularly during the crisis.

Finally, it is worth noting that SentinelOne also provided a comprehensive report after the attack was repelled, intended to give as much insight as possible so that the company could learn as much as possible from it. According to the spokesperson, the report contains advice you can actually act on, including a full Attack Diagram. This kind of after-sales support, coupled with the platform’s next-gen capabilities and excellent pre-sales (helping even before anyone had signed anything), leaves a good impression of SentinelOne in general. More importantly, it has made the multinational a lot safer in the broadest sense of the word. In the end, that’s what matters most.
