Amazon has powered up a series of new servers that customers can now rent. The servers are equipped with clusters of Nvidia A100 GPUs and powered by Intel Cascade Lake processors.

The servers carry the P4 name and are the successors to the G4 generation. Each one contains eight Nvidia A100 Tensor Core GPUs, connected with NVLink bridges. Together, the cards have 320GB of graphics memory, or 40GB per GPU.

To complement the cards, Amazon equips the servers with 48 Intel Cascade Lake cores (96 virtual cores), 1.1TB of system memory and 8TB of SSD storage. The servers' network connection reaches speeds of up to 400Gb/s.

UltraCluster

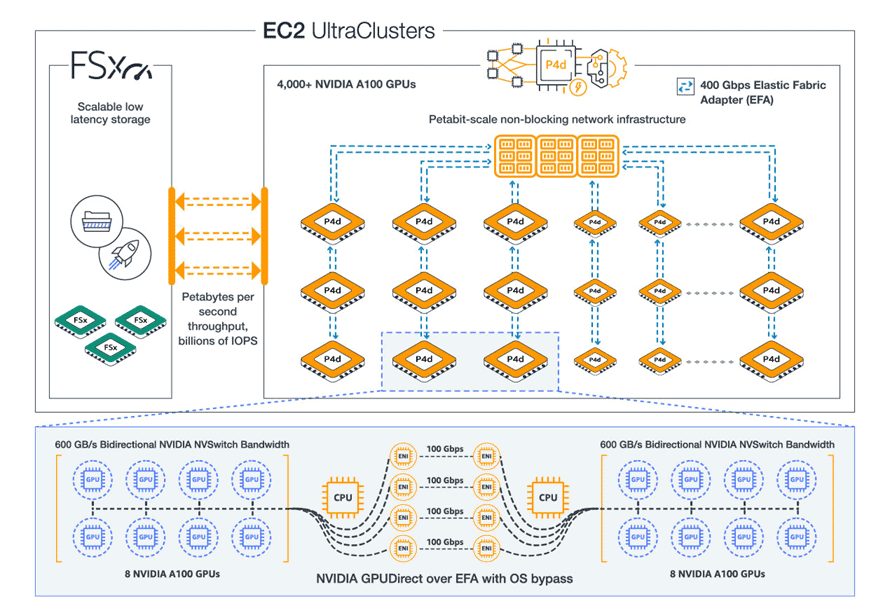

The servers can work together in large clusters, which AWS calls EC2 UltraClusters. In such a cluster, more than 4,000 GPUs can work together, essentially forming a supercomputer. Customers can also rent just part of a cluster.

Machine Learning

Amazon promises that the servers are up to 2.5 times faster in deep learning tasks than the previous generation, making it about 60 percent cheaper to train a model.

This is in line with recent MLPerf benchmark results, which showed that the Nvidia A100 GPU is several times faster in machine learning tasks than its predecessor, the Nvidia T4. AWS used that GPU in the G4 instances.
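The link between "2.5 times faster" and "about 60 percent cheaper" can be checked with a little arithmetic. The sketch below assumes that both generations cost the same per hour; the article does not say how Amazon arrived at its figure, so that assumption and the `price_ratio` parameter are purely illustrative.

```python
# Sketch: how a speedup translates into a saving on a fixed training job.
# Assumption (not stated in the article): old and new instances have the
# same hourly price, i.e. price_ratio = 1.0.

def relative_cost_saving(speedup: float, price_ratio: float = 1.0) -> float:
    """Return the fraction of training cost saved on the new instance.

    speedup:     how many times faster the new instance finishes the job
    price_ratio: new hourly price divided by old hourly price (hypothetical)
    """
    if speedup <= 0:
        raise ValueError("speedup must be positive")
    # New cost relative to old cost = (relative price) * (relative run time).
    return 1.0 - price_ratio / speedup


if __name__ == "__main__":
    print(f"Saving at 2.5x speedup: {relative_cost_saving(2.5):.0%}")
```

Under that equal-price assumption, a job that finishes 2.5 times faster costs 40 percent of what it did before, which matches the quoted saving of about 60 percent.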

Pricing

If you want to rent a P4 instance, you’ll have to shell out $32.77 (€28) per hour. Lower prices are available for longer rental periods: $20 (€17.09) per hour for an annual subscription or $11.57 (€9.89) per hour for a three-year subscription.
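To put those rates in perspective, the following sketch works out the discount of each subscription relative to the hourly price, plus the yearly bill for an instance that runs around the clock. It assumes the quoted subscription prices are effective hourly rates for continuous use; the names `ON_DEMAND`, `RATES` and `discount` are chosen here for illustration only.

```python
# Sketch: compare the quoted P4 hourly rates (USD figures from the article).
# Assumption: subscription prices are effective hourly rates for an instance
# that runs continuously.

HOURS_PER_YEAR = 24 * 365  # 8,760 hours, ignoring leap years

ON_DEMAND = 32.77  # USD per hour without a subscription
RATES = {"one-year": 20.00, "three-year": 11.57}  # USD per hour


def discount(rate: float, base: float = ON_DEMAND) -> float:
    """Return the fractional saving of `rate` compared with `base`."""
    return 1.0 - rate / base


if __name__ == "__main__":
    for term, rate in RATES.items():
        yearly = rate * HOURS_PER_YEAR
        print(f"{term}: {discount(rate):.1%} below the hourly price, "
              f"about ${yearly:,.0f} per year")
```

Roughly speaking, the annual subscription is a bit under 40 percent cheaper than the plain hourly price, and the three-year subscription close to 65 percent cheaper.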