Over a year ago, a little-known Chinese AI lab by the name of DeepSeek caused an intense stir. Its reasoning model R1 was competitive with the best LLMs from OpenAI, Google and Anthropic while being open-source and enormously efficient. Now, with V4, the team has delivered once again. Long-context agentic workflows are suddenly cheaper to run, which makes AI adoption that little bit more attainable.

It’s important to stress just how different the generative AI field looks now compared to early 2025. When DeepSeek released R1, the reasoning version of its V3 model, complex LLM thinking steps were still quite new. OpenAI was running GPT-4o for general queries and o1 for heavy reasoning tasks. Claude’s 3.5 family was Anthropic’s latest offering, and Google had only just come out with Gemini 2.0 Flash while still using 1.5 Pro as its flagship.

Fast forward to today, and many AI challenges have been conquered. DeepSeek R1 has been thoroughly outperformed by proprietary rivals: Gemini 3(.1) in all its forms, and Claude, which is up to 4.6 for Sonnet and 4.7 for Opus. More importantly, this generation of models is both more efficient and enormously more capable in agentic use cases, situations in which LLMs act upon IT systems rather than merely ingesting information from them. It is within this context that DeepSeek once again finds a niche in which to reset economic expectations for AI users.

The costs keep falling

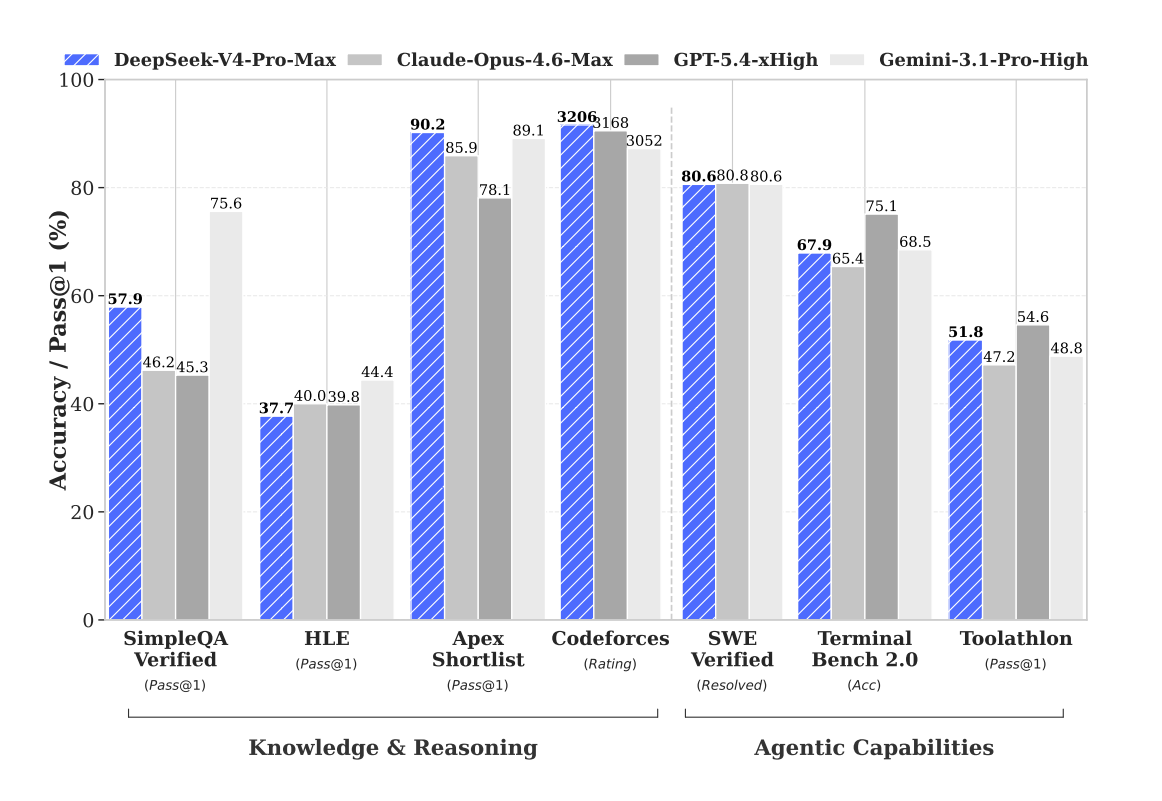

In a 1-million-token context scenario, DeepSeek-V4-Pro requires only 27 percent of the computational power and 10 percent of the KV cache, the short-term memory AI models use, compared to DeepSeek-V3.2. More critically, every benchmark used to check the new model’s capabilities and knowledge puts it at or above the state of the art. Admittedly, Claude Opus 4.7 was not included, and neither was the just-released GPT-5.5, for obvious reasons. Nevertheless, only Gemini 3.1 Pro at high reasoning settings comprehensively beats DeepSeek V4, and only in one benchmark (SimpleQA Verified).
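To put those two percentages in perspective, here is a rough sketch of the arithmetic. Only the 27 and 10 percent figures come from the numbers reported above; the baseline cache size and the memory budget are made-up placeholders, not measurements of either model.

```python
import math

# Fractions reported for a 1-million-token context, relative to DeepSeek-V3.2.
COMPUTE_FRACTION = 0.27
KV_CACHE_FRACTION = 0.10


def reduction_factor(fraction: float) -> float:
    """Return how many times smaller a requirement is than its baseline."""
    if not 0 < fraction <= 1:
        raise ValueError("fraction must be in the range (0, 1]")
    return 1 / fraction


def sessions_that_fit(memory_budget_gb: float, cache_per_session_gb: float) -> int:
    """Count how many long-context sessions fit in a fixed KV cache budget."""
    # The small epsilon guards against floating-point results such as 79.999...
    return math.floor(memory_budget_gb / cache_per_session_gb + 1e-9)


# Hypothetical numbers for illustration only, not measured values.
BASELINE_CACHE_GB = 80.0   # assumed V3.2 KV cache for one 1M-token session
MEMORY_BUDGET_GB = 640.0   # assumed memory set aside for the KV cache

baseline_sessions = sessions_that_fit(MEMORY_BUDGET_GB, BASELINE_CACHE_GB)
v4_sessions = sessions_that_fit(
    MEMORY_BUDGET_GB, BASELINE_CACHE_GB * KV_CACHE_FRACTION
)
```

Whatever the real baseline turns out to be, the ratios are what matter: a cache one tenth the size lets the same memory hold about ten times as many 1M-token sessions, and 27 percent of the compute works out to roughly 3.7 times less work per session.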

If we take these results at face value, we should expect coding performance at about the level of Opus 4.6 or GPT-5.4, but in an open-weight format. This means you could technically run the model yourself if you have 800 GB of RAM to spare. While the DeepSeek team omits specific economic considerations, on the right hardware you stand to benefit tremendously if V4 can do the agentic job Claude and GPT did before. Some napkin math tells us that a complex agentic loop that may have cost you 10 dollars before could now drop to just 1.50 or 2.50 dollars. This all depends on the length of the context, input and output; both OpenAI and Anthropic charge considerably more once requests go beyond certain context lengths (272K tokens for OpenAI and 200K for Anthropic).
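For readers who want to redo this napkin math for their own workloads, a minimal sketch follows. Every price, threshold multiplier and token count in it is a hypothetical placeholder rather than any vendor’s actual rate, and applying the long-context surcharge to the whole request is a simplifying assumption.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Pricing:
    """Per-token pricing; every value used below is a hypothetical placeholder."""
    input_per_mtok: float                         # dollars per million input tokens
    output_per_mtok: float                        # dollars per million output tokens
    long_context_threshold: Optional[int] = None  # input tokens; None means no surcharge
    long_context_multiplier: float = 1.0          # applied to the whole request above the threshold


def step_cost(pricing: Pricing, input_tokens: int, output_tokens: int) -> float:
    """Cost in dollars of a single model call."""
    base = (input_tokens * pricing.input_per_mtok
            + output_tokens * pricing.output_per_mtok) / 1_000_000
    over = (pricing.long_context_threshold is not None
            and input_tokens > pricing.long_context_threshold)
    return base * pricing.long_context_multiplier if over else base


def loop_cost(pricing: Pricing, start_context: int, added_per_step: int,
              output_per_step: int, steps: int) -> float:
    """Cost of an agentic loop whose context grows with every step."""
    total = 0.0
    context = start_context
    for _ in range(steps):
        total += step_cost(pricing, context, output_per_step)
        # The model's output and any new tool results are fed back in as input.
        context += added_per_step + output_per_step
    return total


# Hypothetical rate cards: the challenger charges 20 percent of the incumbent's
# base rates and has no long-context surcharge.
incumbent = Pricing(4.0, 20.0, long_context_threshold=200_000,
                    long_context_multiplier=2.0)
challenger = Pricing(0.8, 4.0)

# Hypothetical loop: 20 steps, starting at 50K tokens and growing 12K per step.
incumbent_total = loop_cost(incumbent, 50_000, 10_000, 2_000, 20)
challenger_total = loop_cost(challenger, 50_000, 10_000, 2_000, 20)
ratio = challenger_total / incumbent_total
```

With these placeholder rates the challenger ends up at less than a fifth of the incumbent’s bill, because the surcharge kicks in once the loop’s context passes the threshold. Swapping in current price sheets and your own token counts is the only way to check the estimate for a real workload.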

The Chinese chips

That we got another DeepSeek upset shouldn’t be all that surprising. DeepSeek OCR flew under the radar a little, but its enormously efficient image processing solved some key pain points around the costs of such AI use cases. Now, V4 targets agentic workflows, another recent development that has ramped up costs quickly. Behind the scenes, though, DeepSeek itself has had some significant hurdles to overcome.

Its deep knowledge of Nvidia’s chip logic allowed DeepSeek to train and run R1 in early 2025 at a tremendously cheap rate for its capabilities. Nowadays, it relies on a combination of Nvidia GPUs and Chinese-built Huawei Ascend NPUs to get the job done. Training was completed on a mix of the two, while V4 is heavily optimized to run on Huawei’s Ascend processors.

This is a big upset. For the first time, frontier-level LLM performance is not only possible on hardware built by a non-US manufacturer, but preferred. We are no financial analysts, and market reactions to AI developments have often flummoxed us, but one should not be surprised if Nvidia and other US firms take a beating in the DeepSeek V4 aftermath. Then again, cheaper agentic AI economics don’t mean you need fewer chips. Recall the Jevons paradox, famously cited by Microsoft CEO Satya Nadella just after DeepSeek-R1 was released: making a technology more efficient may increase its total consumption rather than decrease it.

Technical breakthroughs

Regardless of the outcomes for AI labs and hardware players, DeepSeek V4 presents some technical breakthroughs that are worth discussing. First of all, its KV cache is a fraction of the size required by DeepSeek’s previous best effort. V4 achieves this with what’s known as an interleaved hybrid configuration of Compressed Sparse Attention (CSA) and Heavily Compressed Attention (HCA). In practice, this means tokens are compressed at different rates depending on the level of attention V4 ‘thinks’ it needs to apply to each piece of information.
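How differing compression rates shrink the cache can be shown with a toy accounting model. This is not DeepSeek’s implementation: the assumption that layers simply alternate between two attention types, the compression ratios of 4 and 64, and every function name are invented for illustration.

```python
import math
from typing import List


def cached_entries(seq_len: int, compression_ratio: int) -> int:
    """Entries kept for one layer when every `compression_ratio` tokens share one entry."""
    return math.ceil(seq_len / compression_ratio)


def interleave(ratio_a: int, ratio_b: int, num_layers: int) -> List[int]:
    """Alternate two compression ratios across layers (assumed layout)."""
    return [ratio_a if i % 2 == 0 else ratio_b for i in range(num_layers)]


def hybrid_cache_entries(seq_len: int, layer_ratios: List[int]) -> int:
    """Total cached entries summed over all layers."""
    return sum(cached_entries(seq_len, r) for r in layer_ratios)


def cache_fraction(seq_len: int, layer_ratios: List[int]) -> float:
    """Cache size relative to storing one entry per token in every layer."""
    uncompressed = seq_len * len(layer_ratios)
    return hybrid_cache_entries(seq_len, layer_ratios) / uncompressed


# Hypothetical ratios: a lightly compressed layer type and a heavily compressed one.
ratios = interleave(4, 64, num_layers=4)
fraction = cache_fraction(1024, ratios)
```

The point of the sketch is only that mixing a mild and an aggressive compression rate pulls the total well below what either an uncompressed cache or uniform mild compression would need; the real mechanism decides what to compress far more selectively.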

In addition, DeepSeek had previously published research on routing information through the model itself more intelligently. This technique, known as mHC (Manifold-Constrained Hyper-Connections), was explained by the Chinese AI lab at the start of the year. V4 is the first model to use it, bringing tremendous efficiency gains.

Conclusion: an unsure footing

The previous DeepSeek-induced stock market debacle tells us that the reaction to Chinese-built models can drive negativity around AI. However, it seems pretty evident that the gains achieved here will make agentic workflows more viable, and basic economics suggests use cases for the technology will expand as a result. In addition, the extensive research and open nature of DeepSeek’s work must once again be applauded. Even if there is some secret agenda on the part of the model, V4’s advances can still trickle down to LLMs from model-makers considered ‘safe’ by the Western world.

For the moment, though, it’s up to the competition to prove that proprietary models can once again trump DeepSeek’s open-weight challenger.