MobileLLM was designed for smartphones, which have limited computing power and energy resources. The model was developed by researchers from Meta and PyTorch.

The new LLM is the result of optimizing several small models. These models contain fewer than one billion parameters, which makes them suitable for devices with limited computing power, such as smartphones.
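To make the link between parameter count and device limits concrete, here is a back-of-the-envelope sketch of how much memory a model's weights alone occupy at a few common numeric precisions. It assumes the weights are stored as plain fp32, fp16, int8 or int4 values and ignores activations, the key-value cache and runtime overhead, so real memory use is higher. The function name `weight_footprint_gb` is hypothetical and only for illustration.

```python
# Rough storage needed just for model weights: parameters x bytes per parameter.
# Assumption: plain dense storage at each precision; activations, KV cache and
# runtime overhead are ignored, so real memory use will be higher.

BYTES_PER_PARAM = {"fp32": 4, "fp16": 2, "int8": 1, "int4": 0.5}


def weight_footprint_gb(num_params: float, dtype: str) -> float:
    """Return the size of the weights in gigabytes (10**9 bytes)."""
    return num_params * BYTES_PER_PARAM[dtype] / 1e9


# Parameter counts mentioned in this article, for comparison.
models = {"125M": 125e6, "350M": 350e6, "7B": 7e9}

for name, n in models.items():
    sizes = ", ".join(
        f"{dt}: {weight_footprint_gb(n, dt):.2f} GB" for dt in BYTES_PER_PARAM
    )
    print(f"{name:>5} -> {sizes}")
```

At fp16, a 350-million-parameter model needs about 0.7 GB for its weights, while a 7-billion-parameter model needs about 14 GB, more than the total memory of many smartphones before any other app is counted.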

When new models are released, the number of parameters is always heavily emphasised. It is meant as an indicator of the model's capability: more parameters generally yield more accurate and detailed results, although they also make the model slower and more demanding to run. For example, GPT-4 is estimated to contain more than a trillion parameters.

New approach

MobileLLM was developed through a collaboration between Meta Reality Labs, Meta AI Research (FAIR) and PyTorch. It is just one illustration of a possible approach to building LLMs for smartphones. Interested parties can use the results and insights to develop their own deep, energy-efficient models, and Meta has released the training code for this purpose.

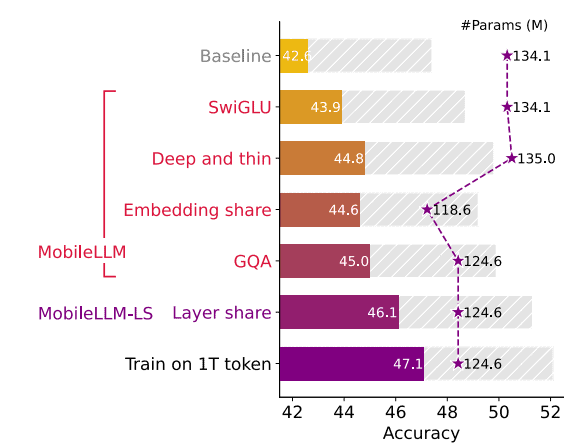

Future studies can build on these insights to improve this type of LLM further. According to benchmark tests comparing it with previous models of the same size, MobileLLM delivers improvements of 2.7 to 4.3 percent. Those are significant gains, but there is still plenty of room for future innovation.

Performance rivals billion-parameter LLMs

On zero-shot common sense reasoning tasks, the 350-million-parameter version of the model achieved accuracies close to those of Llama 2 with 7 billion parameters. The accompanying chart shows the results; the 350-million-parameter model is represented by the grey dashed bar.

Two important findings from MobileLLM can guide future research. First, model performance improves when the architecture favours depth over width, that is, more but thinner layers. Second, weight-sharing techniques appear to offer significant storage savings.
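Both findings are easier to picture with a rough parameter count. The sketch below compares a hypothetical "deep and thin" transformer with a hypothetical "shallow and wide" one that have similar parameter budgets, and then shows one assumed form of weight sharing in which each stored block is run twice in a row, so effective depth doubles while the stored parameters stay the same. The configurations, the `Config` class and the helper functions are illustrative assumptions, not MobileLLM's actual architecture; the counting also ignores biases and normalization layers and assumes full multi-head attention with a SwiGLU feed-forward block and one embedding matrix shared between input and output. It only counts parameters and says nothing about accuracy, which comes from the researchers' experiments.

```python
# Approximate parameter counts for a decoder-only transformer.
# Assumptions (simplified): Q, K, V and O projections are each d x d,
# the feed-forward block is SwiGLU (three d x ffn matrices), no biases,
# norms ignored, one embedding matrix shared by input and output.
from dataclasses import dataclass


@dataclass
class Config:
    n_layers: int      # transformer blocks actually stored in memory
    d_model: int       # hidden width
    ffn_dim: int       # feed-forward inner width
    vocab: int = 32_000
    repeat: int = 1    # how many times each stored block is run (weight sharing)


def block_params(c: Config) -> int:
    attention = 4 * c.d_model * c.d_model
    feed_forward = 3 * c.d_model * c.ffn_dim
    return attention + feed_forward


def stored_params(c: Config) -> int:
    # A shared block is stored once, no matter how often it is reused.
    return c.n_layers * block_params(c) + c.vocab * c.d_model


def effective_depth(c: Config) -> int:
    return c.n_layers * c.repeat


# Hypothetical configurations with roughly similar budgets.
deep_thin = Config(n_layers=30, d_model=512, ffn_dim=1408)
shallow_wide = Config(n_layers=12, d_model=768, ffn_dim=2048)
# Same stored weights as deep_thin, but every block is executed twice.
shared = Config(n_layers=30, d_model=512, ffn_dim=1408, repeat=2)

for name, cfg in [("deep/thin", deep_thin), ("shallow/wide", shallow_wide), ("shared", shared)]:
    print(f"{name}: {stored_params(cfg) / 1e6:.1f}M stored params, depth {effective_depth(cfg)}")
```

With these assumptions, the deep/thin and shallow/wide models store about 113 million and 110 million parameters respectively, yet one is 30 layers deep and the other only 12; the wider model also spends more of its budget on the embedding matrix. The shared variant stores exactly as many parameters as the deep/thin model while running 60 layers, which illustrates why weight sharing can add depth without extra storage, at the cost of additional compute per token.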
