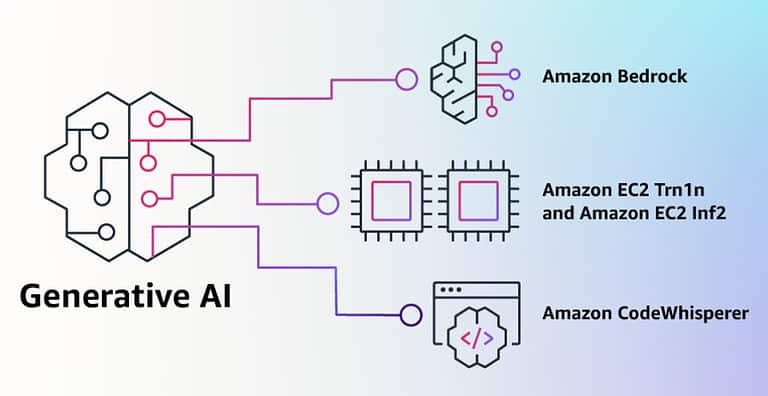

Amazon has so far stayed out of the generative AI discussion. Everyone was, of course, waiting for a response on generative AI from AWS. Today, with Amazon Bedrock, AWS introduces a new tool that gives developers access to multiple generative AI models. Applying generative AI within applications should be easy with Amazon Bedrock.

Amazon itself states that it has been involved in AI and machine learning for 20 years. With that, it is unthinkable that Amazon would not get involved in the world of generative AI. However, AWS is choosing a different route than Microsoft and Google. Those companies are primarily busy adding generative AI to their own existing applications to make them more innovative and better.

AWS provides developers with tools to apply generative AI to their own applications

AWS is providing developers with tools to add generative AI features to their applications. This in itself is not a surprising choice, as AWS has been providing developers with all kinds of AI and machine learning (ML) capabilities for years, whether that is building and training their own machine learning models with Amazon SageMaker, analyzing images, or converting speech to text and text to speech. Now it is adding tools for building generative AI into the applications that developers create. Notably, AWS is leaning on multiple models for this.


The foundation models

Generative AI allows you to create new content, whether it’s text, images, videos, music or even programming code. Creating that new content requires very sophisticated ML models. If a text or an image about a bicycle is to be created, the model must have some idea of what a bicycle is, what a bicycle can do and what it looks like. That kind of source information must come from the ML model itself, which is why these models are trained on vast amounts of data. Such a model is called a foundation model (FM): it is the foundation of all the content you can create with it. In 2019, the largest FM consisted of 330 million parameters. Now the largest models have passed the 500 billion parameter mark, a growth of roughly 1,600x in just four years.
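As a rough sanity check on that growth factor, the sketch below divides the two parameter counts quoted above. The 500 billion figure is a lower bound (the mark has been "passed"), so the ratio it yields is a floor rather than an exact value. The second calculation shows which model size a 1,600x factor would imply. The variable names are purely illustrative.

```python
# Parameter counts as quoted in the text.
params_2019 = 330_000_000          # largest FM in 2019
params_now_min = 500_000_000_000   # "passed the 500 billion mark" (lower bound)

# Minimum growth factor implied by the two figures.
min_growth = params_now_min / params_2019
print(f"At least {min_growth:,.0f}x larger")  # At least 1,515x larger

# Model size that an exact 1,600x growth factor would correspond to.
implied_params = 1600 * params_2019
print(f"1,600x implies about {implied_params / 1e9:.0f} billion parameters")
```

In other words, a 1,600x factor fits a largest model somewhat above the 500 billion mark, at roughly 528 billion parameters.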

With this increase in size, the models have also improved and can generate text or images of very high quality. They can perform very complex tasks that were previously unthinkable. The way you can use ChatGPT today, for example, is already pretty powerful. However, there is an easy way to make it even more powerful: generative AI becomes far more useful when you add your organization's data on top of the model. We already saw this with Einstein GPT, where Salesforce combines data from its sales, service and marketing solutions with OpenAI's models. This allows a customer service representative to quickly answer a customer question with specific information from the organization’s knowledge base, in the organization’s writing style.

Amazon Bedrock gives developers access to multiple foundation models

With the introduction of Amazon Bedrock, AWS offers developers direct access to the APIs of several foundation models. These include models from AI21 Labs, Anthropic, Stability AI and several of Amazon’s own foundation models.

At AI21 Labs, the main focus is on reading and writing text, so that the large language model (LLM) understands text better and writes better text. With the Jurassic-2 model provided through Amazon Bedrock, you can generate text in English, Spanish, French, German, Portuguese, Italian and Dutch.

Anthropic is developing an AI that is good at conversations and performing tasks. This can take the form of a personal assistant, a chatbot to which customers can send their questions, or a chatbot that guides the customer through a complete return process.

Stability AI focuses on creating images based on text or on a photo. Suppose you want to refresh the interior of your living room. You can supply a picture of your current style, and based on that, Stability AI’s model will come up with similar pictures in which that style is refined further, giving you inspiration to refresh or renew your living room. You can also simply ask it to create an image of a country, modern or industrial living room, but then the result is based on less information.

Customizing the models with Amazon Bedrock

What Amazon Bedrock excels at is the customizability of a foundation or large language model. According to Amazon, it’s a matter of putting labelled datasets into an S3 bucket and linking them to Bedrock. Bedrock can then easily combine the model with the data supplied in those linked datasets. There is no need to train the model to do this.

One example that Amazon itself gives is a dataset of about 20 items. A marketing employee creates a list of the best slogans from marketing campaigns of recent years and combines it with a list of new products, product descriptions and product photos. Bedrock can then use this information to generate a completely new marketing campaign with social media posts, online banners, articles for the blog and advertising flyers.
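To make the idea of a small labelled dataset more concrete, here is a minimal sketch of how such examples could be prepared before they are placed in an S3 bucket. The source does not describe the file format Bedrock expects, so the JSON Lines layout, the `prompt` and `completion` field names, and the sample records are all assumptions chosen for illustration. The upload step itself is deliberately left out.

```python
import json

# Hypothetical labelled examples: a product brief paired with a slogan
# that marketing considers a good result. Field names are an assumption,
# not a documented Bedrock schema.
examples = [
    {"prompt": "Lightweight city bike for commuters",
     "completion": "Glide through the city."},
    {"prompt": "Insulated water bottle for hikers",
     "completion": "Cold water, warm memories."},
    {"prompt": "Noise-cancelling headphones for open offices",
     "completion": "Hear your own thoughts again."},
]


def validate(records, required=("prompt", "completion")):
    """Raise ValueError if any record lacks a non-empty required field."""
    for index, record in enumerate(records):
        missing = [key for key in required if not record.get(key)]
        if missing:
            raise ValueError(f"record {index} is missing {missing}")


def to_jsonl(records):
    """Serialize records as JSON Lines: one JSON object per line."""
    return "\n".join(json.dumps(r, ensure_ascii=False) for r in records)


validate(examples)
jsonl = to_jsonl(examples)
print(jsonl)
```

A real dataset would contain around 20 such pairs, as in Amazon's example. Validating the records before upload is a cheap way to catch empty labels early.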

In the end, Bedrock uses both the customer data and the available models. However, these remain separate; the data never becomes part of the model. That would take far too much training. Besides, this is customer data, which is always confidential. AWS also states that customer data always remains in the customer environment.

Amazon Bedrock is available as a limited preview

AWS has now presented Amazon Bedrock, but general availability (GA) is still some time away. A few selected customers have started working with it. Based on their experiences, the product will be further developed and improved where necessary. Especially with generative AI, it is good to test things in advance and sometimes add limitations to ensure that the AI behaves as desired. We have already seen some undesirable behavior from the chatbots of Bing and Google. AWS expects GA in the second half of 2023.
