Cisco is launching two new SiliconOne processors. They are intended to form the basis of infrastructure for AI and machine learning (ML).

The two new Cisco SiliconOne processors, the 5-nanometer 51.2Tbps SiliconOne G200 and the 25.6Tbps G202, recently joined the larger SiliconOne processor family. A single chipset can be customized for either routing or switching, so users no longer depend on a separate processor architecture for certain network functions. The processors come with their own operating system, P4-programmable forwarding code and an SDK.

The new products are designed to enhance networks so that they can better deliver AI and ML workloads and other highly distributed applications. The processors mainly target large enterprises and hyperscalers.

Technical functionality

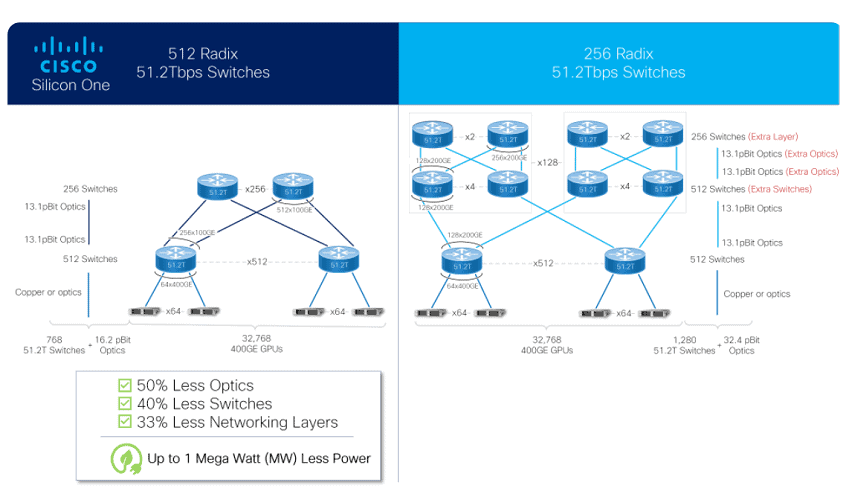

The new processors feature a P4-programmable parallel packet processor that performs more than 435 billion lookups per second. They also support up to 512 Ethernet ports, which allows users to build an AI/ML cluster of 32K GPUs connected at 400G. According to Cisco, such a cluster reduces the required switching capacity by 40 percent.
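The link between 512 ports and a 32K-GPU cluster can be checked with some rough arithmetic. The sketch below is illustrative only and rests on assumptions that are not in Cisco's announcement: a non-blocking two-tier leaf-spine fabric, each leaf using half its ports for GPUs and half for uplinks, 100G per port (51.2Tbps divided over 512 ports), and each 400G GPU connection split into four 100G links. The function and constant names are hypothetical.

```python
# Rough sizing sketch for a two-tier leaf-spine fabric (assumed topology,
# not Cisco's published design).

SWITCH_BANDWIDTH_GBPS = 51_200  # G200 capacity from the article
PORTS_PER_SWITCH = 512          # Ethernet ports from the article
GPU_LINK_GBPS = 400             # per-GPU connection speed from the article


def two_tier_endpoint_ports(radix: int) -> int:
    """Endpoint-facing ports in a non-blocking two-tier leaf-spine fabric.

    Assumption: every leaf uses half its ports downward and half upward,
    and every spine port connects to a different leaf.
    """
    down_ports_per_leaf = radix // 2
    max_leaves = radix  # one spine port per leaf
    return down_ports_per_leaf * max_leaves


port_speed_gbps = SWITCH_BANDWIDTH_GBPS // PORTS_PER_SWITCH  # 100G per port
links_per_gpu = GPU_LINK_GBPS // port_speed_gbps             # 4 x 100G per GPU
endpoint_ports = two_tier_endpoint_ports(PORTS_PER_SWITCH)   # 100G ports for GPUs
max_gpus = endpoint_ports // links_per_gpu

# Switch count under the same assumptions: all leaves plus all spines.
switch_count = PORTS_PER_SWITCH + PORTS_PER_SWITCH // 2

print(f"{port_speed_gbps}G per port, {links_per_gpu} links per GPU")
print(f"{max_gpus} GPUs at {GPU_LINK_GBPS}G using {switch_count} switches")
```

Under these assumptions the result is 32,768 GPUs, which matches the 32K figure. A real deployment may use a different oversubscription ratio or port breakout, which would change the numbers.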

Other features include advanced load balancing and “packet spraying.” The latter spreads data traffic across multiple GPUs and switches to prevent congestion and reduce latency. Hardware-based link-failure recovery has also been added, which helps networks keep operating at peak efficiency.
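To illustrate why spraying helps against congestion, the toy model below compares it with conventional per-flow hashing, where all packets of one flow follow the same link. It is a simplified sketch, not Cisco's implementation: the four-link fabric, the round-robin spraying policy, the CRC-based hash and the flow names are all assumptions chosen for the example.

```python
# Toy comparison of per-flow hashing versus per-packet spraying.
# All names and parameters are hypothetical.
import zlib
from collections import Counter
from itertools import cycle

LINKS = 4  # assumed number of parallel links between two switches


def per_flow_load(packets, links=LINKS):
    """Pin every packet of a flow to one link chosen by hashing the flow id."""
    load = Counter()
    for flow_id, size in packets:
        link = zlib.crc32(flow_id.encode()) % links
        load[link] += size
    return load


def sprayed_load(packets, links=LINKS):
    """Send consecutive packets over consecutive links, ignoring the flow."""
    load = Counter()
    next_link = cycle(range(links))
    for _flow_id, size in packets:
        load[next(next_link)] += size
    return load


# Two large flows of 1000 equally sized packets each, typical of the
# few-but-heavy traffic pattern between GPUs.
packets = [("gpu0->gpu7", 1)] * 1000 + [("gpu1->gpu6", 1)] * 1000

print("per-flow hashing:", dict(per_flow_load(packets)))
print("packet spraying: ", dict(sprayed_load(packets)))
```

With only two flows, per-flow hashing can use at most two of the four links, while spraying loads all four evenly. The trade-off is that sprayed packets can arrive out of order, so the receiving side has to tolerate or undo the reordering.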

Scheduled Fabric

All this functionality should eventually lead to what Cisco calls a “Scheduled Fabric.” In a Scheduled Fabric, the physical network components (processors, optical links and switches) are interconnected in a large modular chassis. This allows the elements to communicate with each other efficiently and coordinate their scheduling.

The new Cisco SiliconOne processors are currently being tested by selected customers.
